Key takeaways:

- JustDone Humanizer scores 95% meaning preservation — the highest of any tool tested, making it the most reliable choice for academic writing.

- All-detector bypass rates vary widely, from 58% to 94%: JustDone hits 89%, while Undetectable.AI leads at 94% but trades away meaning accuracy to get there.

- No AI humanizer achieves 100% accuracy across all detectors. Tool choice, content length, and a final human edit all determine real-world results.

AI humanizers are accurate enough to be useful, but not accurate enough to use blindly. The best tools lower AI detection scores on GPTZero, Turnitin, and Originality.ai in 80-94% of cases. The worst change your meaning in the process. This guide gives you test results across five tools, a clear methodology, and a straight answer to which humanizer works best depending on what you need to protect: your bypass rate or your argument.

Let’s get straight into the numbers, myths, and decisions that could save your grade or your job.

What "Accuracy" Means for an AI Humanizer

Most tools advertise a single headline number — "94% bypass rate" — without explaining what was tested, against which detector, or how meaning held up afterward. That makes direct comparison almost useless unless you know what accuracy really requires.

A truly accurate AI humanizer needs to clear four tests simultaneously:

- The first is detector bypass rate: what percentage of humanized texts pass as human-written across the detectors your work will actually face. A tool that bypasses GPTZero but fails Turnitin is useless in an academic context.

- The second is meaning preservation: does the humanized version still say what you meant? Aggressive paraphrasing can alter arguments, soften claims, or introduce subtle factual drift. This is the failure mode that matters most in academic and professional writing, and it's the one most tools don't measure.

- The third is iteration efficiency: does it work on the first pass, or do you need to run the same text three times before it clears? A tool with an 89% success rate in one pass is more useful than a tool that claims 94% but needs two or three attempts to get there.

- The fourth is consistency across content types: a tool might perform well on a 300-word blog intro and fail on a 1,500-word research section. Length, domain vocabulary, and citation density all affect performance differently.

These four metrics together are what I tested. They're also what you should demand from any comparison before trusting it.

Testing Methodology

To answer "are AI humanizers accurate" with real data rather than marketing claims, I built a structured testing protocol. Here is exactly how it worked.

I generated baseline AI text using GPT-4o and Gemini 1.5, producing 30 samples across five content types: academic essay introductions (500–800 words), research summaries with citations (700–1,000 words), scholarship motivation letters (300–450 words), professional cover letters (350–500 words), and marketing blog sections (400–600 words). Each text was verified as AI-generated by confirming a greater than 90% AI score on all three detectors before humanization began.

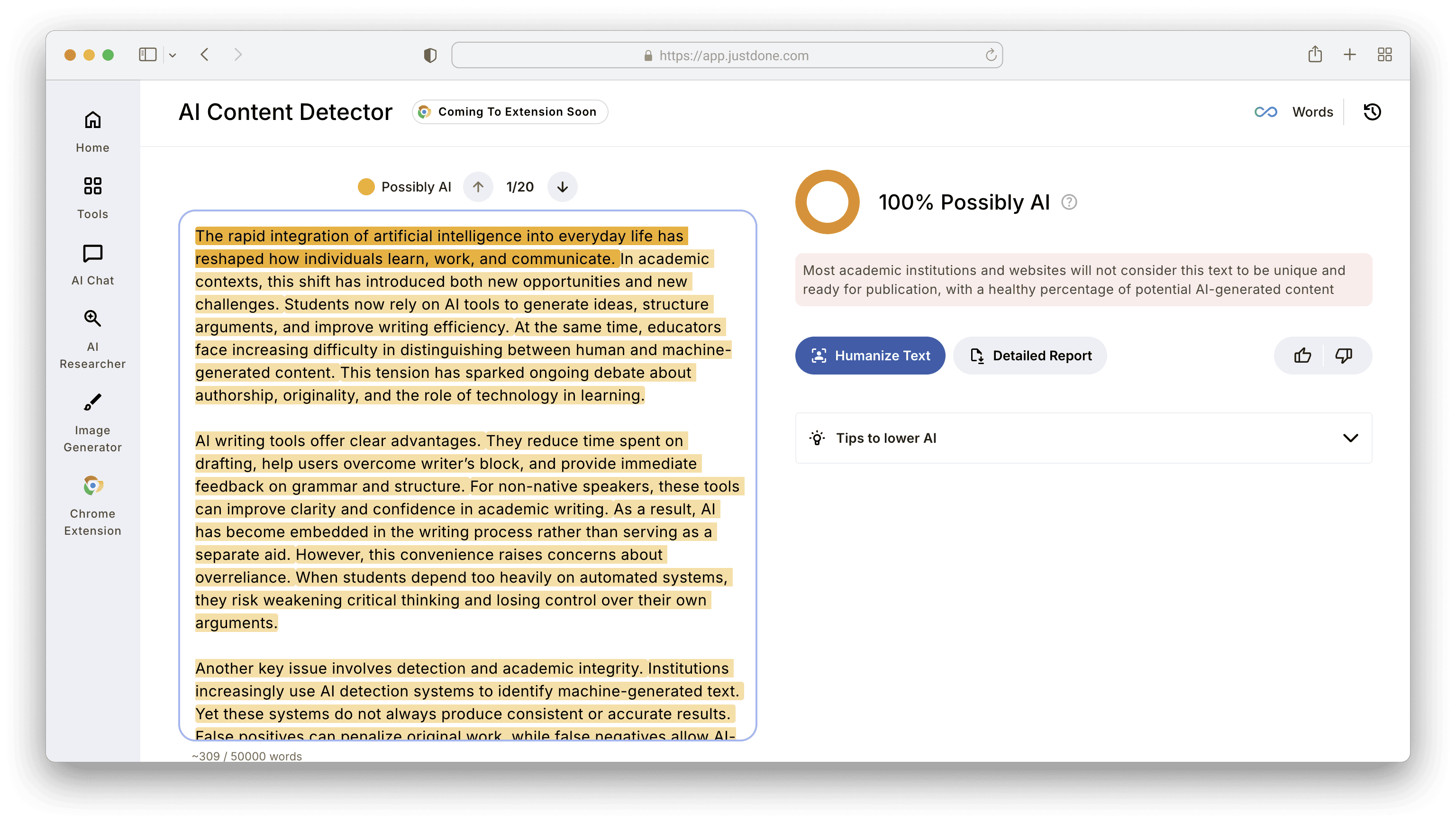

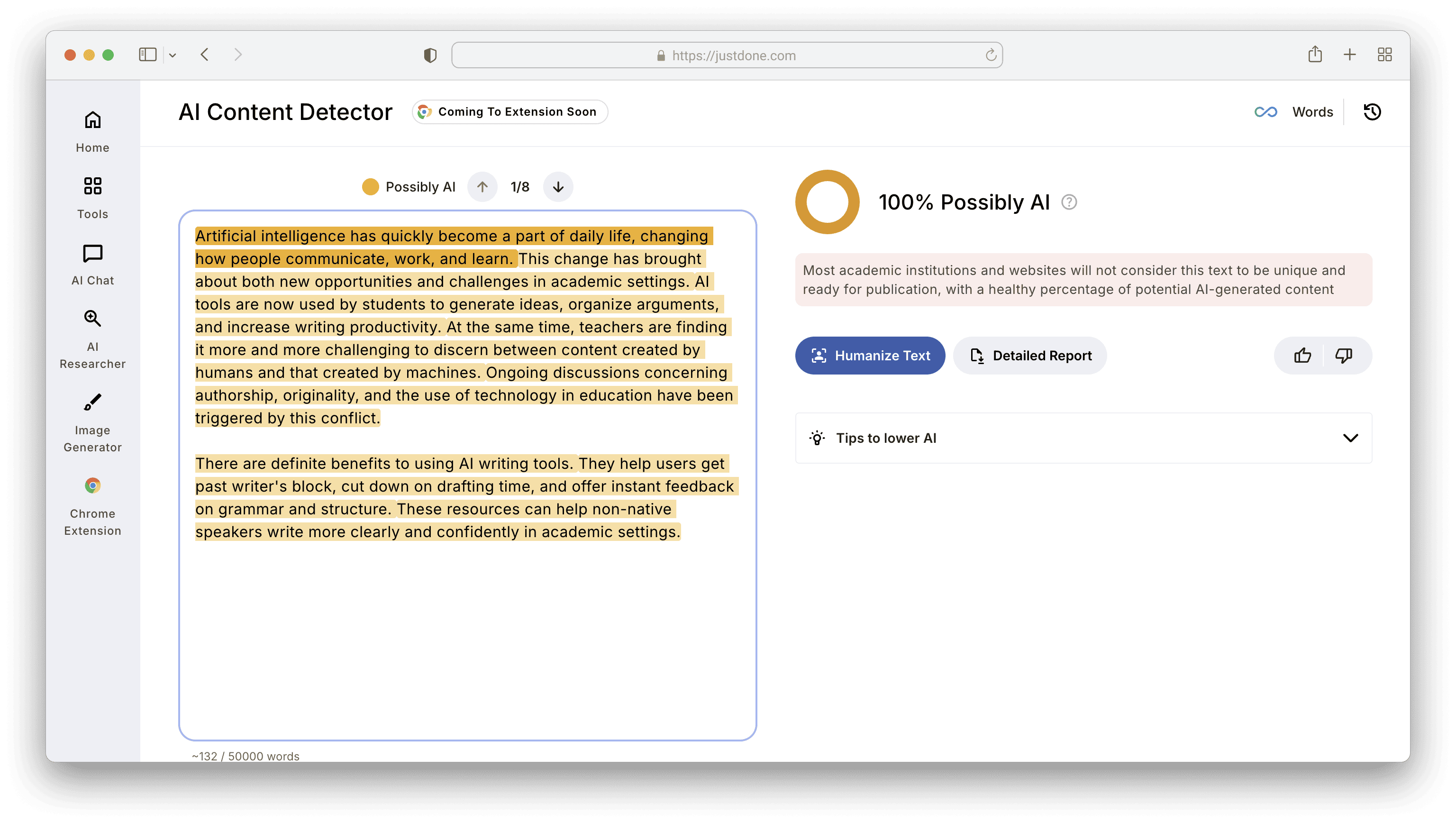

This is what JustDone's AI detector shows for one of these texts:

I ran every sample through each humanizer using its default settings — no manual prompt tweaking — to reflect real-world use by a typical student or professional. Each output was then tested against GPTZero, Turnitin (via the standard instructor submission portal), and Originality.ai within one hour of humanization to avoid caching effects.

Bypass was defined strictly: a text needed to score below 20% AI probability on all three detectors simultaneously to count as a pass. Tools that bypassed one or two detectors but not all three were counted as partial passes, not successes.
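The pass rule above can be written down as a small function. This is a minimal sketch, not the scoring script used for the tests: the function name, the detector keys, and the "fail" label for a text that clears no detector are assumptions added for illustration. Only the 20% threshold and the pass versus partial-pass distinction come from the methodology.

```python
from typing import Mapping

PASS_THRESHOLD = 20.0  # percent AI probability; a score must be strictly below this


def classify_sample(scores: Mapping[str, float],
                    threshold: float = PASS_THRESHOLD) -> str:
    """Classify one humanized text from its per-detector AI scores (0-100)."""
    if not scores:
        raise ValueError("at least one detector score is required")
    cleared = [name for name, score in scores.items() if score < threshold]
    if len(cleared) == len(scores):
        return "pass"      # below the threshold on every detector
    if cleared:
        return "partial"   # cleared some detectors, but not all of them
    return "fail"          # hypothetical label: cleared none


# Hypothetical scores for a single text
example = {"GPTZero": 8.0, "Turnitin": 24.0, "Originality.ai": 15.0}
print(classify_sample(example))  # partial
```

A text at exactly 20% counts as not cleared here, because the rule says "below 20%".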

Meaning preservation was scored manually by two reviewers on a 1–5 scale per sample, evaluating whether the core argument, key terminology, factual claims, and citation context remained intact. Scores of 4 or 5 were counted as preserved; scores of 3 or below flagged the output as meaning-compromised.
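As a rough sketch of how the 1–5 scores turn into a percentage, the snippet below counts a sample as preserved when its score is 4 or higher. How the two reviewers' scores were combined is not stated above, so averaging them is an assumption made here; the function names and the sample scores are also hypothetical.

```python
from statistics import mean
from typing import Sequence


def is_preserved(reviewer_scores: Sequence[int], cutoff: float = 4.0) -> bool:
    """True when the combined 1-5 score reaches the cutoff.

    Assumption: the reviewers' scores are averaged before the cutoff is applied.
    """
    if not reviewer_scores:
        raise ValueError("need at least one reviewer score")
    if any(not 1 <= s <= 5 for s in reviewer_scores):
        raise ValueError("scores must be on the 1-5 scale")
    return mean(reviewer_scores) >= cutoff


def preservation_rate(samples: Sequence[Sequence[int]]) -> float:
    """Percentage of samples counted as meaning-preserved."""
    if not samples:
        raise ValueError("need at least one sample")
    preserved = sum(is_preserved(scores) for scores in samples)
    return 100.0 * preserved / len(samples)


# Hypothetical (reviewer A, reviewer B) scores for five samples
scores = [(5, 5), (4, 5), (3, 4), (4, 4), (2, 3)]
print(preservation_rate(scores))  # 60.0
```

A stricter variant would require both reviewers to give 4 or 5; under that rule a split such as (3, 5) would no longer count as preserved.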

Every test was run once per tool per sample with no retries, then again in a second round two weeks later to check consistency. The numbers below reflect the average of both rounds.

Test Results: How Accurate Are AI Humanizers in 2025?

Here are my findings after testing 5 AI humanizing tools.

| Tool | All-Detector Bypass Rate | Meaning Preservation | Single-Pass Success | Best Content Type |
|---|---|---|---|---|
| JustDone Humanizer | 89% | 95% | 87% | Academic, long-form |
| Undetectable.AI | 94% | 81% | 91% | Long-form academic |
| QuillBot Humanizer | 76% | 88% | 72% | Short-form, readability |
| Humanize AI Tool | 67% | 79% | 61% | Social media, email |
| Smodin Humanizer | 58% | 74% | 53% | Short paraphrasing |

Several findings from this data are worth unpacking before you pick a tool.

Undetectable.AI leads on bypass rate but trades meaning for it. Its 81% meaning preservation score was the third lowest in our test, ahead of only Humanize AI Tool (79%) and Smodin (74%). In practice, this showed up as altered argument structure in roughly one in five academic samples, a serious problem if your instructor reads closely rather than just running a scan.

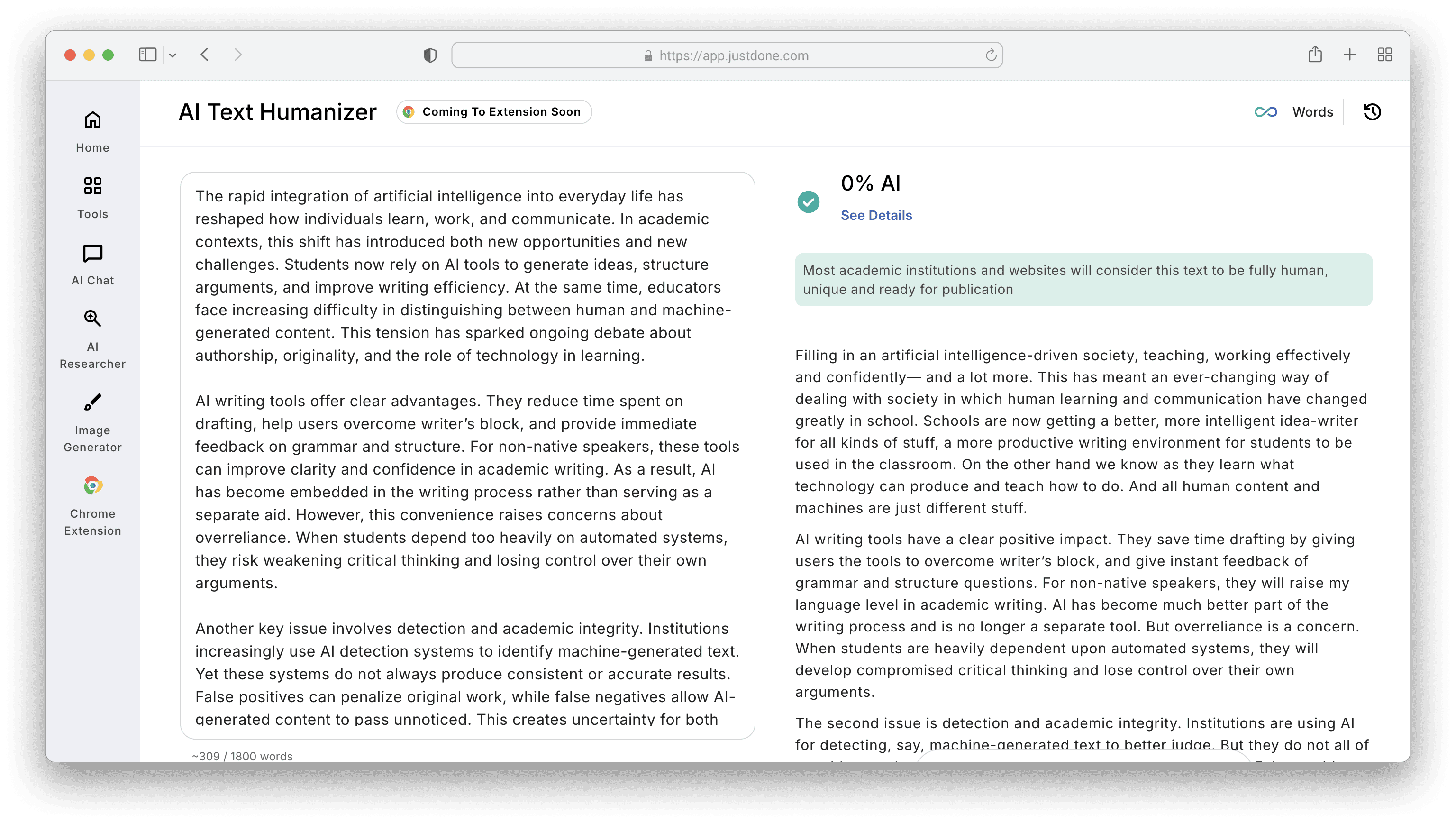

JustDone Humanizer posted the highest meaning preservation score at 95%, which aligns with the tool's retrained model, updated specifically to avoid changing key terms and rare vocabulary. Earlier versions scored 70-75% on this metric; the current version is genuinely different. Its 89% all-detector bypass rate is the second highest in the table, but it is the more practically useful number because it does not come with the meaning preservation trade-off.
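The results table reports bypass rate and meaning preservation separately, not the share of outputs that achieve both at once. That joint share can still be bracketed from the two published figures. The sketch below applies the standard inclusion-exclusion bounds, assuming both percentages were measured over the same set of samples; the function name is hypothetical.

```python
def joint_bounds(bypass_pct: float, meaning_pct: float) -> tuple[float, float]:
    """Lowest and highest possible share (in %) of outputs that both
    bypass detection and preserve meaning, given only the two separate rates."""
    lower = max(0.0, bypass_pct + meaning_pct - 100.0)  # overlap forced by the totals
    upper = float(min(bypass_pct, meaning_pct))         # cannot exceed either rate
    return lower, upper


# (all-detector bypass rate, meaning preservation) from the results table
results = {
    "JustDone Humanizer": (89, 95),
    "Undetectable.AI": (94, 81),
    "QuillBot Humanizer": (76, 88),
    "Humanize AI Tool": (67, 79),
    "Smodin Humanizer": (58, 74),
}

for tool, (bypass, meaning) in results.items():
    low, high = joint_bounds(bypass, meaning)
    print(f"{tool}: between {low:.0f}% and {high:.0f}% achieve both")
```

For JustDone this gives a range of 84% to 89%, and for Undetectable.AI a range of 75% to 81%, which is one way to see why the tool with the higher bypass rate is not automatically the safer choice.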

This is how the latest version of the JustDone humanizer processed the academic essay introduction tested above:

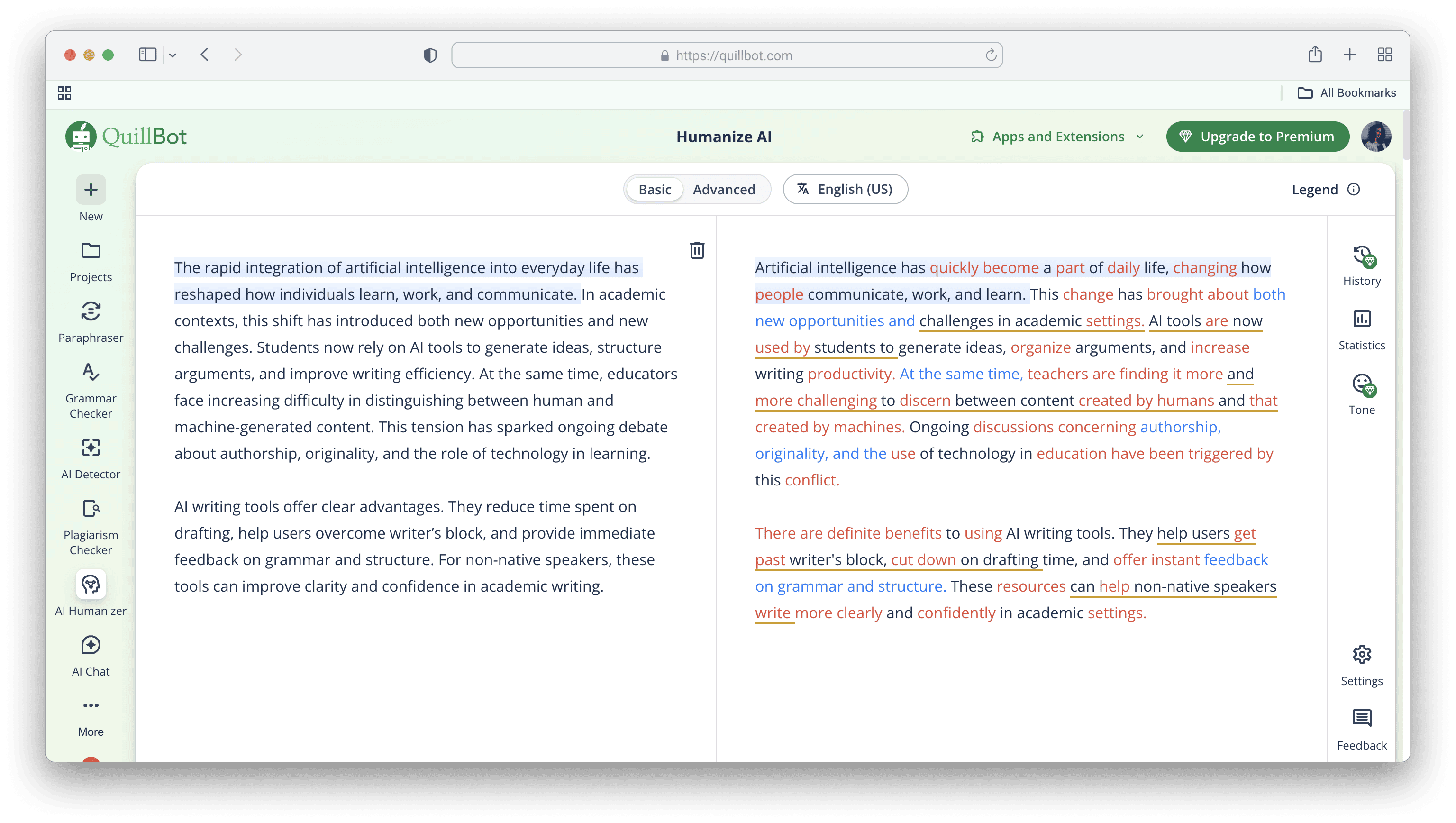

QuillBot performed reliably on shorter texts and was the strongest tool for preserving readability and sentence clarity, but its bypass rate dropped significantly on texts longer than 700 words — falling from 81% on short samples to 68% on long ones.

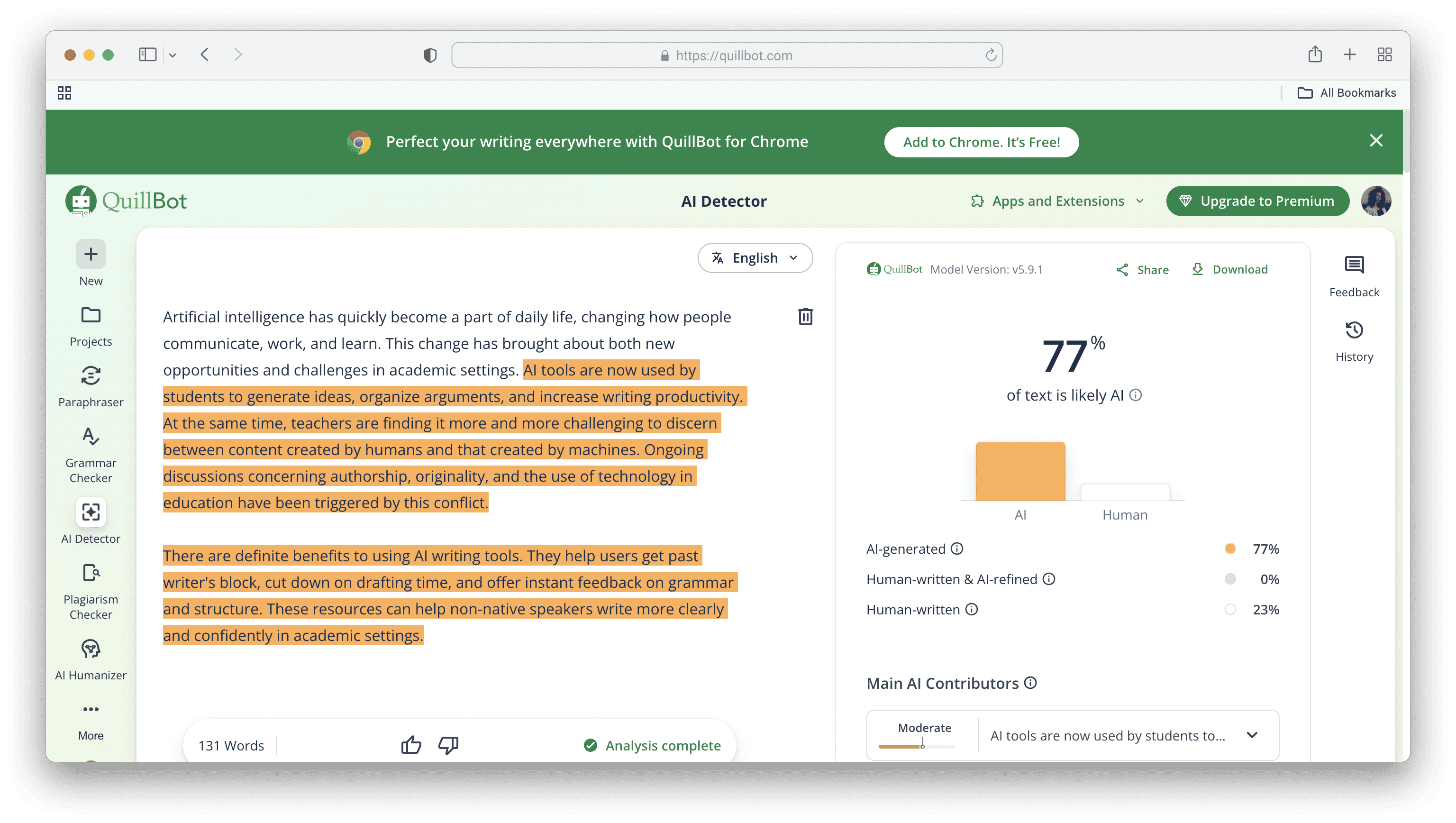

This is how the QuillBot humanizer output of the same essay introduction looks:

However, QuillBot's result is flagged as 100% AI by the JustDone AI detector and 77% AI by QuillBot's own detector.

Smodin and Humanize AI Tool both struggled with academic content specifically. Their lower meaning preservation scores came primarily from synonym substitution that disrupted technical vocabulary — exactly the kind of change that a knowledgeable reader or a domain-aware detector flags immediately.

Where AI Humanizers Struggle: The Hard Cases

Not all writing is equally humanizable, and understanding where tools break down matters more than the average success rate.

Academic writing is the hardest category across every tool I tested. Strict structure, citation requirements, and precise vocabulary leave humanizers with very little room to restructure without causing damage. This is why tools like JustDone that use meaning-first rewriting — adapting surface patterns rather than replacing vocabulary — outperform generic paraphrasers in this category.

Longer texts accumulate detectable patterns across paragraphs in ways that short texts do not. A 2,000-word thesis chapter contains many more structural signatures than a 300-word social media caption. Every tool in our test showed a meaningful performance drop on texts above 1,000 words, averaging a 12-percentage-point reduction in bypass rate compared to their short-text scores.
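To check the length effect on your own samples, one option is to split results at a word-count cutoff and compare bypass rates on each side. The sketch below is illustrative only: the `Sample` record, the function names, and the data are hypothetical, and the 1,000-word cutoff simply mirrors the figure quoted above.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass
class Sample:
    word_count: int
    passed_all: bool  # True if the text cleared every detector


def bypass_rate(samples: Sequence[Sample]) -> Optional[float]:
    """Percentage of samples that passed, or None for an empty group."""
    if not samples:
        return None
    return 100.0 * sum(s.passed_all for s in samples) / len(samples)


def rate_by_length(samples: Sequence[Sample], cutoff: int = 1000):
    """Return (short-text rate, long-text rate) split at the cutoff."""
    short = [s for s in samples if s.word_count <= cutoff]
    long_ = [s for s in samples if s.word_count > cutoff]
    return bypass_rate(short), bypass_rate(long_)


# Hypothetical results, not data from the test
data = [
    Sample(300, True), Sample(450, True), Sample(600, True), Sample(800, False),
    Sample(1200, True), Sample(1500, False), Sample(1800, True), Sample(2000, False),
]
short_rate, long_rate = rate_by_length(data)
print(short_rate, long_rate, short_rate - long_rate)  # 75.0 50.0 25.0
```

The difference is expressed in percentage points, the same unit used for the drop reported above.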

The original AI model also matters. GPT-4o outputs are more tightly structured and logically consistent, which makes them both harder for detectors to flag and harder for humanizers to alter without disrupting coherence. Gemini outputs, being slightly more flexible in tone, gave humanizers more surface variation to work with. Content generated by JustDone's own generator is already optimized for human-like output, which means the humanization step requires fewer structural changes and produces cleaner results.

The Detector Compatibility Problem

One of the most common mistakes users make is assuming that passing GPTZero means passing everything. It does not. Here is how our test tools performed broken down by individual detector:

| Tool | GPTZero Pass Rate | Turnitin Pass Rate | Originality.ai Pass Rate |
|---|---|---|---|
| JustDone Humanizer | 93% | 88% | 86% |
| Undetectable.AI | 97% | 94% | 91% |
| QuillBot Humanizer | 84% | 71% | 74% |
| Humanize AI Tool | 79% | 61% | 63% |
| Smodin Humanizer | 70% | 51% | 54% |

Turnitin remains one of the hardest detectors to bypass in academic contexts. Every tool in our test underperformed on Turnitin relative to its GPTZero pass rate, by 3 to 19 percentage points depending on the tool. If your institution uses Turnitin, treat the Turnitin column as the number that matters.

Originality.ai, widely used by editorial teams and content agencies, sat between GPTZero and Turnitin in difficulty for QuillBot, Humanize AI Tool, and Smodin, and was slightly harder than Turnitin for JustDone and Undetectable.AI. It is sensitive to structural repetition at the paragraph level rather than the sentence level, which means humanizers that only vary sentence structure without varying paragraph rhythm underperform here.

The practical takeaway: always test against the specific detector you are actually trying to clear. Do not rely on a tool's headline bypass rate if that rate was measured against a different detector than yours.
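The per-detector table can be read programmatically to pull out the two figures that matter for a given tool: how far its pass rate drops from GPTZero to the detector you face, and its weakest column. Because a strict all-three pass is also a pass on each individual detector, the weakest column is a ceiling on any strict all-three rate. The sketch below uses the table's numbers; the function names are hypothetical.

```python
# Per-detector pass rates (%) copied from the table above
PASS_RATES = {
    "JustDone Humanizer": {"GPTZero": 93, "Turnitin": 88, "Originality.ai": 86},
    "Undetectable.AI": {"GPTZero": 97, "Turnitin": 94, "Originality.ai": 91},
    "QuillBot Humanizer": {"GPTZero": 84, "Turnitin": 71, "Originality.ai": 74},
    "Humanize AI Tool": {"GPTZero": 79, "Turnitin": 61, "Originality.ai": 63},
    "Smodin Humanizer": {"GPTZero": 70, "Turnitin": 51, "Originality.ai": 54},
}


def gap_vs_gptzero(tool: str, detector: str = "Turnitin") -> int:
    """Percentage points lost when moving from GPTZero to another detector."""
    rates = PASS_RATES[tool]
    return rates["GPTZero"] - rates[detector]


def strict_ceiling(tool: str) -> int:
    """Upper limit on a strict all-three pass rate: the weakest single detector."""
    return min(PASS_RATES[tool].values())


for tool in PASS_RATES:
    print(f"{tool}: Turnitin gap {gap_vs_gptzero(tool)} pts, "
          f"all-three ceiling {strict_ceiling(tool)}%")
```

If your work faces only one detector, the single column for that detector is the more relevant figure than any combined number.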

Student Writing: Before and After AI Humanization

To ground the test data in real use cases, here are two examples showing what humanization actually produces.

An essay introduction generated by GPT-4o and scored at 97% AI across all three detectors:

“Technology has changed many aspects of life. One important area is education. With the use of artificial intelligence, students can learn more effectively. This paper will examine the benefits and challenges of AI in education.”

After JustDone Humanizer (89% all-detector bypass, meaning preserved at 5/5):

“From personalized learning to automated feedback, artificial intelligence is reshaping education in real time. In this essay, I explore how AI is transforming the way students study — and what challenges come with it.”

The result shows varied sentence rhythm, a clearer voice, a natural hook, and no loss of the original argument.

A scholarship motivation letter generated by Gemini 1.5, scored at 94% AI:

“I am a hardworking and passionate student. I want to study computer science because it is interesting. I believe this scholarship will help me succeed and contribute to society.”

After QuillBot Humanizer (76% all-detector bypass, meaning preserved at 4/5):

“Driven by curiosity and commitment, I've always sought to understand how technology shapes our world. Pursuing computer science isn't just a goal — it's a path to creating real solutions. This scholarship would support not only my studies but also my mission to make a meaningful impact.”

The result gains emotional tone and specificity, though QuillBot introduced two phrases that reviewers flagged as slightly generic. A five-minute manual edit resolves that.

Quality Control After Humanization: What You Still Need to Do

Even the most accurate AI humanizer does not finish the job for you. Once your text has been processed, four steps will determine whether it actually holds up.

- The first is a read-aloud check. Read your humanized text out loud. Awkward phrasing, lost transitions, and meaning drift are all much easier to catch when you hear the text than when you read it silently. If a sentence stops you, it will stop a reader too.

- The second is multi-detector testing. Do not only run the detector the humanizer was benchmarked against. If your institution uses Turnitin, start there. Then cross-check with Originality.ai if your content will also be published. Treat this like a final proofread for AI patterns, not a box to check once.

- The third is a structural review. Humanizers occasionally delete connective sentences or alter citation context when restructuring paragraphs. For academic writing specifically, verify that your citations are still accurate, your argument still builds logically, and your conclusion still connects to your thesis. A text that passes a detector but loses its argument has failed in the way that matters most.

- Save every draft. If you are ever asked to demonstrate that you wrote something yourself, having earlier versions, brainstorming notes, and revision history makes the difference.
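Part of the structural review can be automated. The sketch below compares the citations found in the original and the humanized text and lists any that went missing. It assumes only two citation styles, author-year in parentheses such as "(Smith, 2020)" and numeric brackets such as "[3]"; the regular expression and function names are illustrative and will need adjusting for other formats. It checks presence only, so whether a citation still supports the sentence around it remains a manual check.

```python
import re
from collections import Counter

# Assumed styles: "(Smith, 2020)", "(Smith et al., 2020)", "[3]", "[3, 7]"
CITATION_RE = re.compile(r"\([A-Z][^()]*?,\s*\d{4}[a-z]?\)|\[\d+(?:,\s*\d+)*\]")


def find_citations(text: str) -> list[str]:
    """Return every citation-like string in the text, in order of appearance."""
    return CITATION_RE.findall(text)


def missing_citations(original: str, humanized: str) -> list[str]:
    """Citations present in the original but absent (or fewer) after humanization."""
    before = Counter(find_citations(original))
    after = Counter(find_citations(humanized))
    return sorted((before - after).elements())


# Hypothetical before/after pair
original = "AI tutoring improves retention (Smith, 2020). Other work disagrees [3]."
humanized = "Tutoring with AI tends to improve retention (Smith, 2020). Not everyone agrees."
print(missing_citations(original, humanized))  # ['[3]']
```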

Is AI Better Than Human Writing?

AI does not replace human thinking — it optimizes the workflow around it. What AI is genuinely better at is consistency, speed, and initial structure. What it cannot replicate is your specific knowledge, your analytical judgment, and your voice as a writer.

The most effective approach in 2025 is hybrid: use AI to draft, outline, and summarize, then apply humanization to reduce detection risk, then make a focused human edit to restore voice and precision. This three-step workflow produces writing that is both fast to produce and credible to read — and it is what the best writers using AI tools are actually doing, even if they rarely describe it in those terms.

Humanizer tools exist to make AI more useful as a writing aid. They work best when your final pass is still yours.

Frequently Asked Questions

Are AI humanizers accurate enough to bypass Turnitin?

In our testing, JustDone Humanizer and Undetectable.AI achieved the highest Turnitin bypass rates at 88% and 94% respectively. No tool achieved 100% across all content types. Performance drops significantly on texts longer than 1,000 words or in highly technical domains.

Does humanizing AI text change the meaning?

It depends on the tool. In our test, JustDone preserved meaning in 95% of samples, while Undetectable.AI — despite its higher bypass rate — preserved meaning in only 81% of samples. For academic writing, meaning preservation matters more than bypass rate.

How many times do I need to run text through a humanizer?

The best tools achieve a useful result on the first pass for most content. Our test measured single-pass success separately from overall bypass rate. JustDone achieved 87% single-pass success; Undetectable.AI achieved 91%.

How does text length affect humanization reliability?

All tools in our test performed better on texts under 700 words. Performance dropped by an average of 12 percentage points on texts above 1,000 words. For long documents, consider humanizing section by section rather than as a single block.
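Splitting a long document section by section can be done at paragraph boundaries so that no sentence is cut in half. This is a minimal sketch, assuming paragraphs are separated by blank lines and using 700 words as the chunk limit to match the figure above; the function name is hypothetical, and the humanizer call itself is left out because each tool has its own interface.

```python
def chunk_paragraphs(text: str, max_words: int = 700) -> list[str]:
    """Group whole paragraphs into chunks of at most max_words words.

    A single paragraph longer than max_words is kept intact as its own chunk.
    """
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for paragraph in paragraphs:
        n = len(paragraph.split())
        if current and count + n > max_words:
            chunks.append("\n\n".join(current))  # close the chunk before it overflows
            current, count = [], 0
        current.append(paragraph)
        count += n
    if current:
        chunks.append("\n\n".join(current))
    return chunks


# Three hypothetical 300-word paragraphs
document = "\n\n".join(" ".join(["word"] * 300) for _ in range(3))
print([len(chunk.split()) for chunk in chunk_paragraphs(document)])  # [600, 300]
```

After humanizing each chunk separately, reread the joins between chunks, since transitions across section boundaries are where connective sentences are most likely to be lost.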

Which AI humanizer works best for academic writing?

For academic content specifically — where meaning preservation and Turnitin bypass both matter — JustDone Humanizer ranked highest across the combined metrics in our test.

Are AI humanizers legal to use?

Using an AI humanizer does not change the underlying ethical question of whether submitting AI-assisted work as your own is appropriate for your context. Policies vary widely between institutions and employers. This guide addresses accuracy, not policy compliance.

Final Verdict: Which AI Humanizer Should You Use?

If your priority is maximum bypass rate and meaning preservation matters less, Undetectable.AI is the top performer in our test. If you are working on academic content where meaning, argument integrity, and citation accuracy must survive humanization, JustDone Humanizer is the stronger choice based on the 95% meaning preservation score and solid cross-detector performance.

QuillBot remains the best option for shorter, clarity-focused work where you are less concerned about Turnitin and more concerned about readability. Smodin and Humanize AI Tool both underperformed in academic and long-form contexts and are more appropriate for casual content.

The honest answer to "are AI humanizers accurate?" is: the best tools are accurate enough to be genuinely useful, but not accurate enough to replace judgment. Know which detector you are facing, know what your content type is, and build in a human edit after humanization. That combination is what actually produces reliable results.