Key takeaways:

- False positives happen because of patterns, not proof. AI detectors flag structure and rhythm, not intent.

- Stay calm and show evidence. Check with multiple tools and keep drafts or version history to prove authorship.

- Pre-check and revise smartly. Detect AI with JustDone to spot triggers, then vary structure and tone before submitting.

A false positive happens when you write a text on your own, but an AI detection tool like Turnitin or ZeroGPT flags it as AI-generated. In this article, I’ll walk you through the most common mistakes that trigger false positives across leading AI detectors: Turnitin, Grammarly, Copyleaks, GPTZero, and Originality.ai. I’ll also share practical ways to avoid them.

What to Do if You Were Falsely Accused

No AI detector is 100% accurate. If your assignment was flagged as AI-written, you can prove you didn't use AI with these steps:

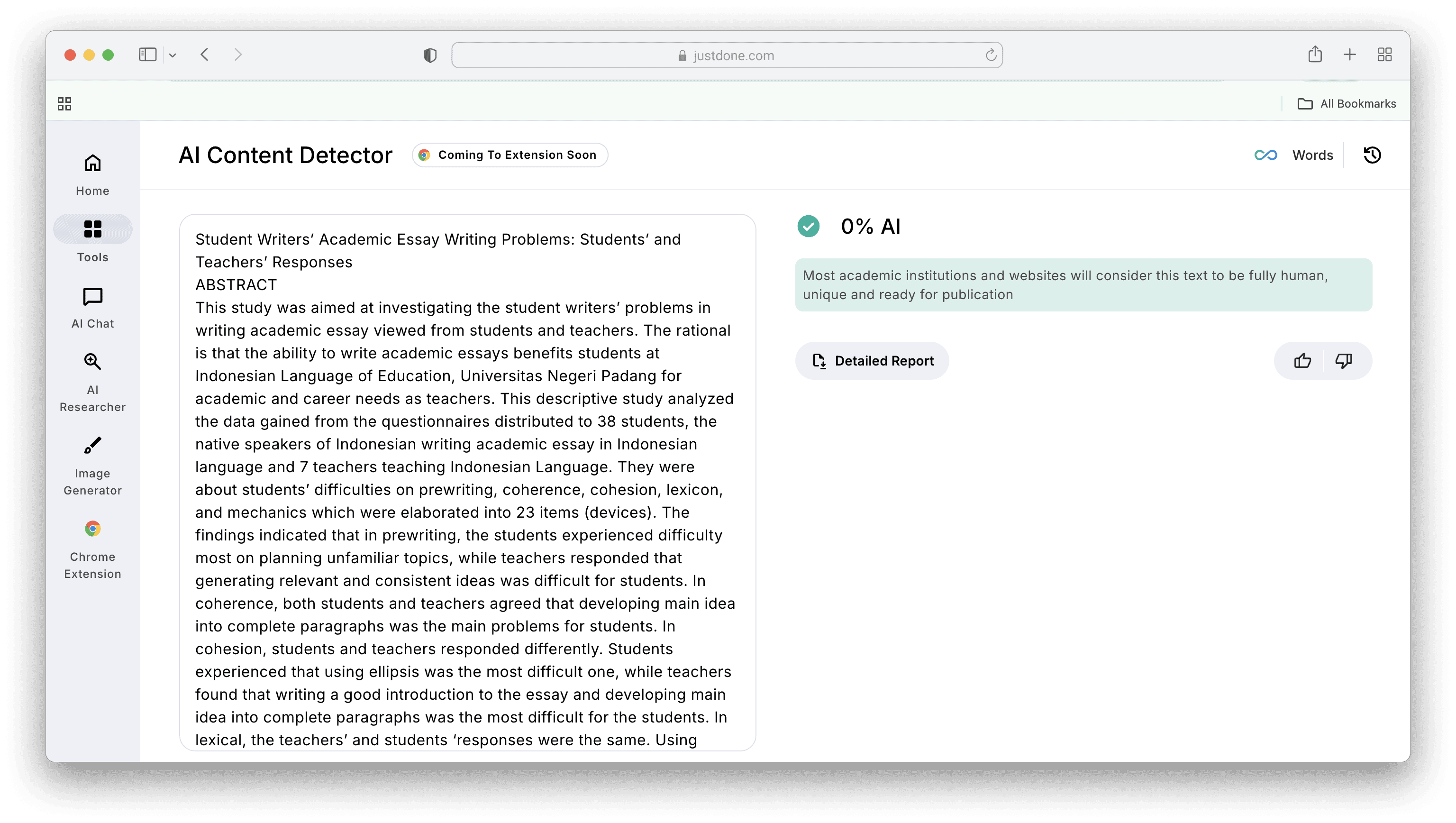

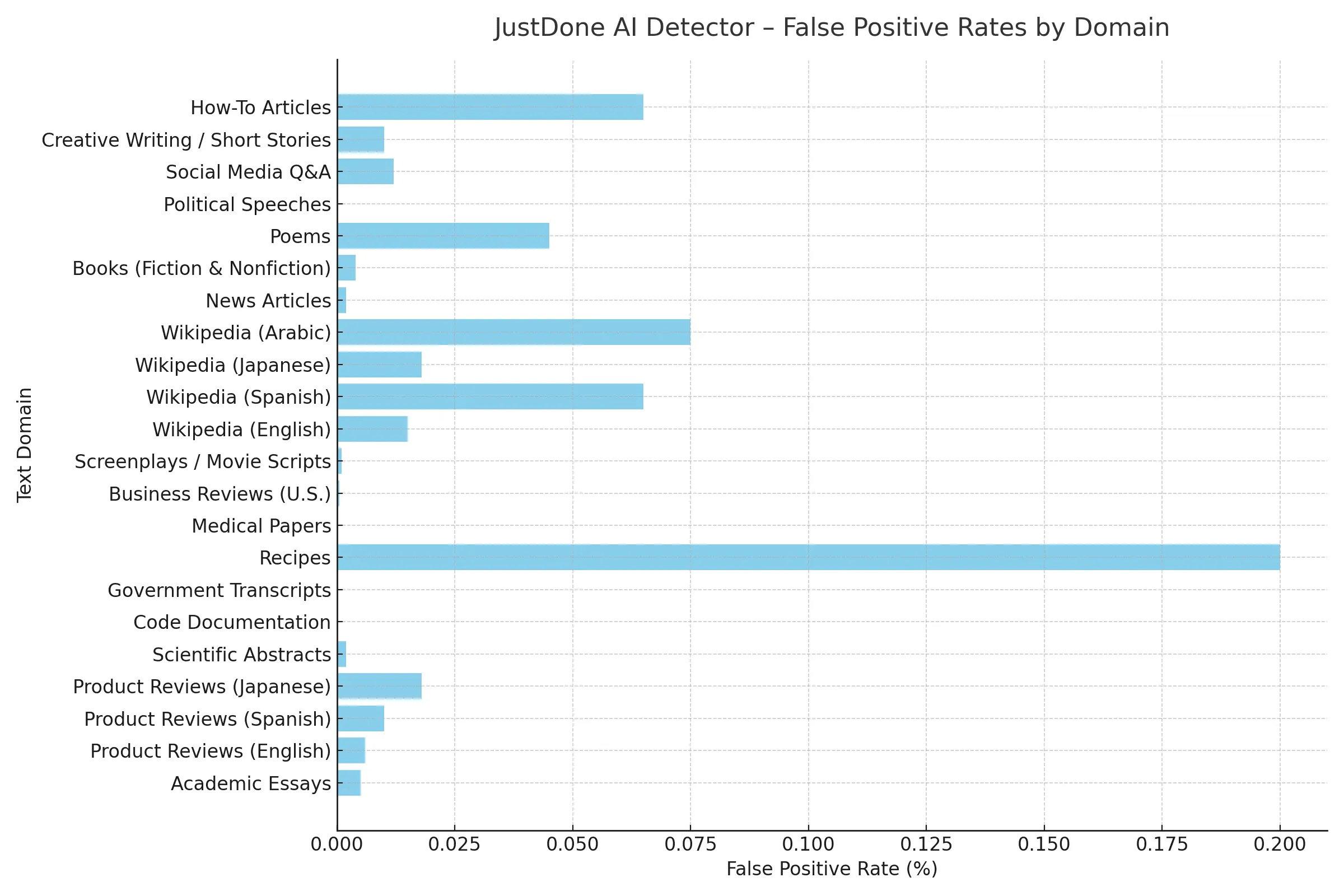

- Try several AI checkers and models to confirm the result. Pre-check your work with JustDone's AI Detector, since it is accurate for academic texts and reports an extremely low false-positive rate (0.1%-0.2%, depending on the domain). To check a detector's accuracy yourself, run it on academic research written before LLMs appeared.

- Don't react defensively to your professor's comments. Take your time and send a formal, polite response.

- Review your institution's AI usage policy.

- Collect any notes you made while writing, your revision history, and similar records. You may need them to show how much effort you put into the assignment and to question the reliability of the detection result.

- If you can't resolve the issue with your professor, escalate it to the administration. Hold your position calmly and fairly.

Why Is My Writing Being Detected as AI?

In many cases, a high AI score is not about copying; it’s about patterns. AI detectors don’t understand meaning. They analyze structure, probability, and language rhythm. If your writing statistically resembles patterns commonly found in AI outputs, the system may flag it, even if you wrote every word yourself.
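To make "probability" more concrete, here is a minimal sketch of perplexity, a common measure of how predictable wording is. It is a toy: real detectors rely on large language models, while this uses a simple word-frequency model with add-one smoothing. The function name `unigram_perplexity` and the sample sentences are my own assumptions for illustration, not any detector's actual method.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a word-frequency model built from `reference`.

    Toy illustration only: lower values mean the wording is more predictable
    given the reference text.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    counts = Counter(ref_tokens)
    vocab = set(ref_tokens) | set(tokens)
    # Add-one smoothing: every word in the vocabulary gets one extra count,
    # so unseen words still receive a small non-zero probability.
    total = len(ref_tokens) + len(vocab)

    log_prob = sum(math.log((counts[t] + 1) / total) for t in tokens)
    return math.exp(-log_prob / len(tokens))


reference = "the cat sat on the mat the cat sat on the rug"
predictable = "the cat sat on the mat"
surprising = "quantum zebras juggle neon violins gracefully"

print(unigram_perplexity(predictable, reference))
print(unigram_perplexity(surprising, reference))
```

The predictable sentence scores lower than the surprising one. That is the general idea behind flagging "statistically likely" text: the model finds it easy to guess, regardless of who wrote it.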

Here are the most common reasons this happens:

Your writing is too structurally perfect

AI-generated text often has:

- Even paragraph length

- Consistent sentence rhythm

- Balanced sentence structure

- Predictable formatting

If your academic writing is extremely polished and uniform, it can resemble AI output.

Ironically, strong academic writers are sometimes flagged because their work is clean, logical, and well-structured.
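If you want a rough self-check for uniformity, the sketch below measures how much sentence length varies in a draft. It assumes a naive sentence splitter (split after `.`, `!`, or `?`), and the helper names `sentence_lengths` and `length_variation` are hypothetical. No detector is known to use this exact formula; treat it as an illustration of the idea.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Word count of each sentence, using a naive split after ., ! or ?."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Standard deviation of sentence lengths divided by their mean.

    Values near 0 mean every sentence is about the same length.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The cat sat down. The dog ran off. The bird flew away."
varied = (
    "No. The committee spent three long weeks debating the proposal "
    "before voting. It failed."
)

print(length_variation(uniform))
print(length_variation(varied))
```

A value close to zero suggests a very even rhythm. Mixing short and long sentences pushes the number up.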

You use repetitive transitions

Detectors are sensitive to common AI-style transitions such as "That's where [something] comes in," "Here's what that looks like in practice," "The result?", "This is where it gets important," and "And that distinction matters more than it might seem." If many paragraphs begin similarly, the structure may look machine-generated. Humans vary their transitions naturally; AI tends to repeat structural cues.
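One simple way to spot repeated openers before you submit is to count how many paragraphs start with the same few words. The sketch below assumes paragraphs are separated by blank lines. The function name `repeated_openers` and its defaults (first three words, two or more repeats) are arbitrary choices for illustration.

```python
from collections import Counter


def repeated_openers(text: str, n_words: int = 3, min_count: int = 2) -> dict[str, int]:
    """Return paragraph openers (first `n_words` words) used at least `min_count` times."""
    paragraphs = [p.strip() for p in text.split("\n\n") if p.strip()]
    openers = Counter(" ".join(p.lower().split()[:n_words]) for p in paragraphs)
    return {opener: count for opener, count in openers.items() if count >= min_count}


draft = (
    "This is where planning matters. A schedule keeps the project on track.\n\n"
    "This is where budgets matter. Costs grow quickly without limits.\n\n"
    "Finally, review the outcome with your team."
)

print(repeated_openers(draft))
```

Any opener that shows up in the result is a candidate for rewording.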

Your tone is overly neutral

AI writing often sounds emotionally flat and mechanically formal. If your paper avoids any personal framing, uses purely objective phrasing throughout, or has zero variation in tone, it may resemble AI output statistically. Academic writing should be formal, but it shouldn’t sound robotic.

You edited heavily with AI

This is where confusion happens. Even if you wrote the original draft yourself, heavy AI editing can alter:

- Sentence flow

- Structural rhythm

- Vocabulary patterns

At that point, detectors may classify the document as AI-influenced or hybrid authorship. That is not always a false positive. It may reflect how much AI reshaped the structure.

Your writing matches training data patterns

AI detectors are trained on large datasets. If your topic, phrasing style, or sentence structure closely resembles patterns from the training data, it can increase probability scores. This often happens with highly standardized essays or frequently covered academic topics. Again, this does not mean you cheated. It means your writing statistically overlaps with AI-like patterns.

The key insight from all this is that when an AI detector flags your work, it does not mean you copied or cheated. It means the structure triggers AI probability models, and that distinction matters.

How to Reduce False Positives

AI detection scores can be confusing, so let’s clarify a few important points.

First, understand what the AI score actually means. If a detector shows 60% human and 40% AI, it does not mean 40% of your text was written by AI. It means the system is 60% confident the content is human-written. These tools operate on probability, not certainty.

If AI significantly shaped your text, whether through drafting, heavy editing, or structural rewriting, the result is not necessarily a false positive. Even partial AI involvement can influence sentence rhythm and structure enough for the entire piece to be flagged.

Hybrid writing increases this risk, for example when you use AI to:

- Generate an outline

- Rewrite multiple paragraphs

- Optimize structure

- Adjust tone extensively

The more AI reshapes the text, the more detectable its patterns may become.

To reduce risk, keep a clear writing workflow. Draft your content in Google Docs so version history is preserved. Maintain notes, outlines, and research references. This documentation helps demonstrate authorship if questions arise.

Also, avoid unusual formatting or broken structure. Strange spacing, copied formatting from other platforms, or inconsistent layout can sometimes affect detection accuracy.

Length matters too. Very short texts produce less reliable detection results. As a general rule, analyze at least 100 words for a more stable probability estimate.
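A quick length check before running a detector can be sketched as follows. The 100-word threshold comes from the rule of thumb above. The function name `long_enough` is hypothetical, and counting whitespace-separated words is a simplification.

```python
def long_enough(text: str, min_words: int = 100) -> bool:
    """Return True if the text has at least `min_words` whitespace-separated words."""
    return len(text.split()) >= min_words


sample = "This paragraph is far too short to analyze reliably."
print(long_enough(sample))
```

If the check fails, consider testing a longer excerpt rather than trusting the score for a short one.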

Most importantly, treat AI detectors as feedback tools, not final judges. Before submitting important work, run it through multiple detectors. AI detection with JustDone highlights specific phrases and structural patterns that may trigger flags, allowing you to revise proactively instead of reacting later.

What Is a False Positive?

A false positive happens when an AI detector labels fully human-written content as AI-generated, even though no AI was used to create or shape it. However, many cases that people call “false positives” are not actually errors. They are results of hybrid writing workflows where AI played a significant role in drafting or editing.

To understand the difference, here’s how AI detection typically interprets various writing scenarios:

- AI-generated and not edited – Detected as AI-generated

- AI-generated and lightly edited by a human – Still detected as AI-generated

- Human-written but heavily edited with AI tools – Likely detected as AI-generated

- AI-created outline, human draft, and heavy AI editing – Likely detected as AI-generated

- Human-written with light AI grammar or clarity edits – Result depends on how much structure AI changed

- AI-assisted research but fully human-written text – Usually detected as human-generated

- Fully human-written and human-edited – Detected as human-generated

An important detail: using AI to create an outline, suggest structure, or generate early drafts can influence the final writing patterns. Even if a person writes most of the content afterward, structural fingerprints from AI may remain.

For example, if AI drafts a paper and a student carefully edits it afterward, the text may still be detected as AI-influenced. That is not necessarily a false positive, but it reflects the level of AI involvement.

A false positive means no AI shaped the content, yet it was flagged. Hybrid writing, where AI meaningfully influenced structure or phrasing, is usually not considered a detection error.

False Positives on AI Detectors: Comparative Analysis

AI detectors do not analyze the meaning of your text. They analyze statistical patterns in language, based on burstiness (how much sentence length and structure vary) and perplexity (how predictable the wording is). That’s why even well-written human work can sometimes be flagged as AI. Below is a snapshot of how common AI detectors perform on false positives (human text incorrectly flagged as AI) based on independent tests:

| Tool | Approx. False Positive Rate | What Often Triggers False Positives | Typical Use Case |
|---|---|---|---|
| Turnitin AI | < 1% claim (controlled) | Formal academic tone, balanced structure | Academic writing and LMS workflows |
| GPTZero | approx. 0.24% (benchmark test) | Polished grammar, repetitive structure | Student essays and reports |
| Copyleaks AI | approx. 5% (benchmark) | Common phrases, repetitive SEO language | Essays, web content, multilingual texts |
| Originality.ai | approx. 4.8% (benchmark) | Repetitive transitions and passive voice | Marketing and long-form content |

False-positive rates depend on the domain. Depending on the domain, JustDone's AI checker reports a false-positive rate between 0.02% and 0.2%.

On that basis, AI detection with this tool can be considered accurate and reliable for learners.

What False-Positive Scores Really Mean for Learners

A low false positive rate (like Turnitin’s <1% claim) sounds reassuring, but it’s based on controlled tests, not all real writing styles. In real academic use, rates can be higher because:

- Detectors are more likely to flag structure than intent

- Paraphrased or hybrid writing mixes human and AI features

- Short texts (under 100-150 words) are inherently harder to classify reliably

This is why educators treat AI scores as a signal, not a final verdict.
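To see why even a small rate matters at scale, here is a quick back-of-the-envelope sketch. It multiplies a false-positive rate by the number of human-written submissions. The 10,000-essay figure is a made-up example, the function name `expected_false_flags` is hypothetical, and the rates are the approximate ones quoted in the table above.

```python
def expected_false_flags(human_submissions: int, false_positive_rate: float) -> float:
    """Expected number of human-written texts wrongly flagged as AI."""
    if human_submissions < 0:
        raise ValueError("human_submissions must be non-negative")
    if not 0.0 <= false_positive_rate <= 1.0:
        raise ValueError("false_positive_rate must be between 0 and 1")
    return human_submissions * false_positive_rate


# Hypothetical volume: 10,000 fully human-written essays.
essays = 10_000
for label, rate in [("1% rate", 0.01), ("4.8% rate", 0.048)]:
    print(label, round(expected_false_flags(essays, rate)))
```

At a 1% rate, that hypothetical batch would produce roughly 100 wrongly flagged essays, which is why a single score should be read as a signal to investigate rather than as proof.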

Final Thoughts on AI False Positive Results

AI detection false positives happen, and they can create real stress for students, writers, and professionals. But they’re not verdicts; treat them as nudges instead. I recommend varying your sentence structure, drawing on your own experiences, being careful with automated edits, and checking with multiple tools. When your writing sounds human, you’ll see those false alarms drop. And as frustrating as they can be, these moments are just reminders to lean into your unique voice.

Readable, human writing should be intentional, not accidental; it is still what any academic assignment requires. Detectors don’t know you, so they don’t see your thought process. When your work reflects you, they usually see that too.

So the next time a detector flags your essay, don’t overreact. Read it back, ask yourself if it sounds like you, and tweak until it does.

F.A.Q.

What does a false positive mean?

A false positive means an AI detector incorrectly labels human-written text as AI-generated. It does not mean you cheated; it means the system detected structural or statistical patterns it associates with AI writing.

What is a false negative?

A false negative is the opposite of a false positive. It happens when AI-generated content is mistakenly classified as human-written. This shows that AI detectors are not perfect and rely on probability, not certainty.

Is a false positive good or bad?

A false positive is problematic because it can create stress and confusion, especially in academic settings. However, it is not a final verdict. Most institutions treat AI detection scores as indicators, not automatic proof of misconduct.

Can you get a false positive on human-written work?

Yes. Even fully human-written work can trigger a false positive, especially if it has very uniform structure, repetitive transitions, or overly polished grammar. Heavy AI-based editing also raises the score, although that result is not strictly a false positive.

Why is my writing being detected as AI if I wrote it myself?

AI detectors analyze patterns such as sentence rhythm, predictability, and structural consistency. If your writing resembles patterns commonly found in AI outputs, it may be flagged, even if you wrote every word yourself.

How accurate are AI detectors?

AI detection accuracy varies by tool, domain, and text length. Most detectors operate on probability models, meaning they estimate likelihood rather than prove authorship. Short texts and heavily edited drafts tend to produce less reliable results.