Key takeaways:

- 10.3% error rate: JustDone has one of the lowest error rates recorded among AI detection tools, meaning fewer false accusations against genuine human writers.

- 100% accuracy on scientific and news text: Correctly classifies ArXiv papers and journalism articles, the domains most prone to misdetection.

- Detects hybrid writing too: Correctly flags 60% of AI-edited human drafts, going beyond pure AI detection into how people actually write today.

Testing shows that the JustDone AI Detector achieves almost 100% accuracy on academic and scientific papers, with a 10.3% overall error rate. That is the lowest error rate among the major AI detection tools tested, including GPTZero (20.5% error rate) and Copyleaks (23.7% error rate).

Not all AI detectors are equally reliable. A tool with a high false positive rate can flag genuine human writing as AI-generated, causing serious consequences for writers and students.

This guide answers the question directly: How accurate is the JustDone AI Detector? It covers the testing methodology, benchmark datasets, performance metrics, and how JustDone compares with the most widely used alternatives.

What Is JustDone AI Detector?

JustDone's AI detection tool is an AI content checker that classifies text as human-written or AI-generated. It is designed for use in academic integrity reviews, editorial workflows, and content verification.

The detector analyzes linguistic signals including:

- Sentence rhythm and structural repetition

- Probability distributions characteristic of large language models (LLMs)

- Inconsistencies typical of hybrid AI-human writing

- Stylistic markers that vary between human authors and AI systems

The system runs a dual-model architecture trained on over one million text samples, covering outputs from GPT-4, Claude, Gemini, and other major LLMs.

JustDone AI Detector Accuracy: Key Results

The latest model version of the JustDone AI checker was independently evaluated against human-written and AI-generated text across multiple domains.

| Metric | Result |
| --- | --- |
| AI detection accuracy (pure AI text) | 80% |
| Total error rate | 10.3% |
| Full correct classification rate | 67.5% |
| Partial identification of hybrid text | 22.5% |
| Minimum text length for reliable results | 350 characters |

Why the error rate matters more than detection rate alone: A detector that flags everything as AI-generated will achieve a high detection rate, but at the cost of falsely accusing human writers. The error rate captures both false positives and false negatives, making it the most honest single-number measure of real-world reliability.
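The point can be illustrated with a small sketch. The data below is a hypothetical toy set (50 AI texts, 50 human texts), not JustDone's benchmark, and the two helper functions are illustrative names that assume a simple binary AI-or-human label:

```python
# Hypothetical toy data, not a real benchmark: 1 = AI-generated, 0 = human-written.
labels = [1] * 50 + [0] * 50


def detection_rate(labels, preds):
    """Share of AI texts that were flagged as AI (true positive rate)."""
    ai_preds = [p for y, p in zip(labels, preds) if y == 1]
    return sum(ai_preds) / len(ai_preds)


def error_rate(labels, preds):
    """Share of all texts misclassified (false positives plus false negatives)."""
    wrong = sum(1 for y, p in zip(labels, preds) if y != p)
    return wrong / len(labels)


# A "detector" that flags every text as AI-generated.
flag_everything = [1] * len(labels)

print(detection_rate(labels, flag_everything))  # 1.0
print(error_rate(labels, flag_everything))      # 0.5
```

The flag-everything detector catches every AI text, yet it misclassifies every human text, so half of all its verdicts on this balanced set are wrong.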

How JustDone Tests AI Detector: Methodology

Accuracy claims are only meaningful with transparent testing. JustDone's evaluation follows a structured framework aligned with industry-standard AI detection testing practices.

Key methodology principles:

- Independent test sets: Evaluation datasets are fully separate from training data, preventing data leakage and inflated scores.

- Dual-category text sampling: Each test includes verified human-written text alongside AI-generated content from multiple LLMs.

- Domain diversity: Test texts are sourced from academic, journalistic, technical, and web publishing contexts to simulate real-world variety.

- Production API testing: The detector is evaluated using the same API endpoint available to end users, not a stripped-down lab version.

- Error analysis loop: Misclassifications are logged, categorized, and analyzed through root cause analysis, feeding directly into model improvement cycles.

This methodology reduces the gap between benchmark performance and real-world results — a gap that has historically made published accuracy figures from AI detectors misleading.
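As a rough illustration of the independent-test-set principle, the sketch below checks whether any test text also appears in the training data. It is an assumption about how such a check could look, not JustDone's actual pipeline; `fingerprint` and `leaked_samples` are hypothetical names, and the check only catches exact duplicates after case and whitespace normalization, not paraphrases or near-duplicates.

```python
import hashlib


def fingerprint(text):
    """Hash a lowercased, whitespace-normalized copy of the text."""
    normalized = " ".join(text.lower().split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def leaked_samples(train_texts, test_texts):
    """Return test texts whose normalized form also occurs in the training set."""
    train_hashes = {fingerprint(t) for t in train_texts}
    return [t for t in test_texts if fingerprint(t) in train_hashes]


# Hypothetical example data.
train = ["The cat sat.", "Hello world"]
test = ["hello   WORLD", "A new sentence."]

print(leaked_samples(train, test))  # ['hello   WORLD']
```

An empty result is a necessary condition for a clean split under these assumptions, not proof of one.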

Datasets Used in JustDone AI Detection Testing

Testing of JustDone's AI detection drew on three types of sources: human-written texts, AI-generated texts, and mixed texts.

Human-Written Text Sources

To ensure authentic human writing, test samples are drawn from:

- Peer-reviewed academic papers (pre-2022, before widespread generative AI adoption)

- News and journalism articles

- Student essays and coursework

- Technical and medical publications

- Editorial and long-form blog content

Why pre-2022 papers matter: Scientific writing published before ChatGPT's release guarantees no AI involvement, eliminating the ambiguity that plagues newer text samples.

AI-Generated Text Sources

AI test samples include outputs from:

- GPT-3.5 and GPT-4 family models

- Claude (Anthropic)

- Gemini (Google)

- Additional open-source and commercial LLMs

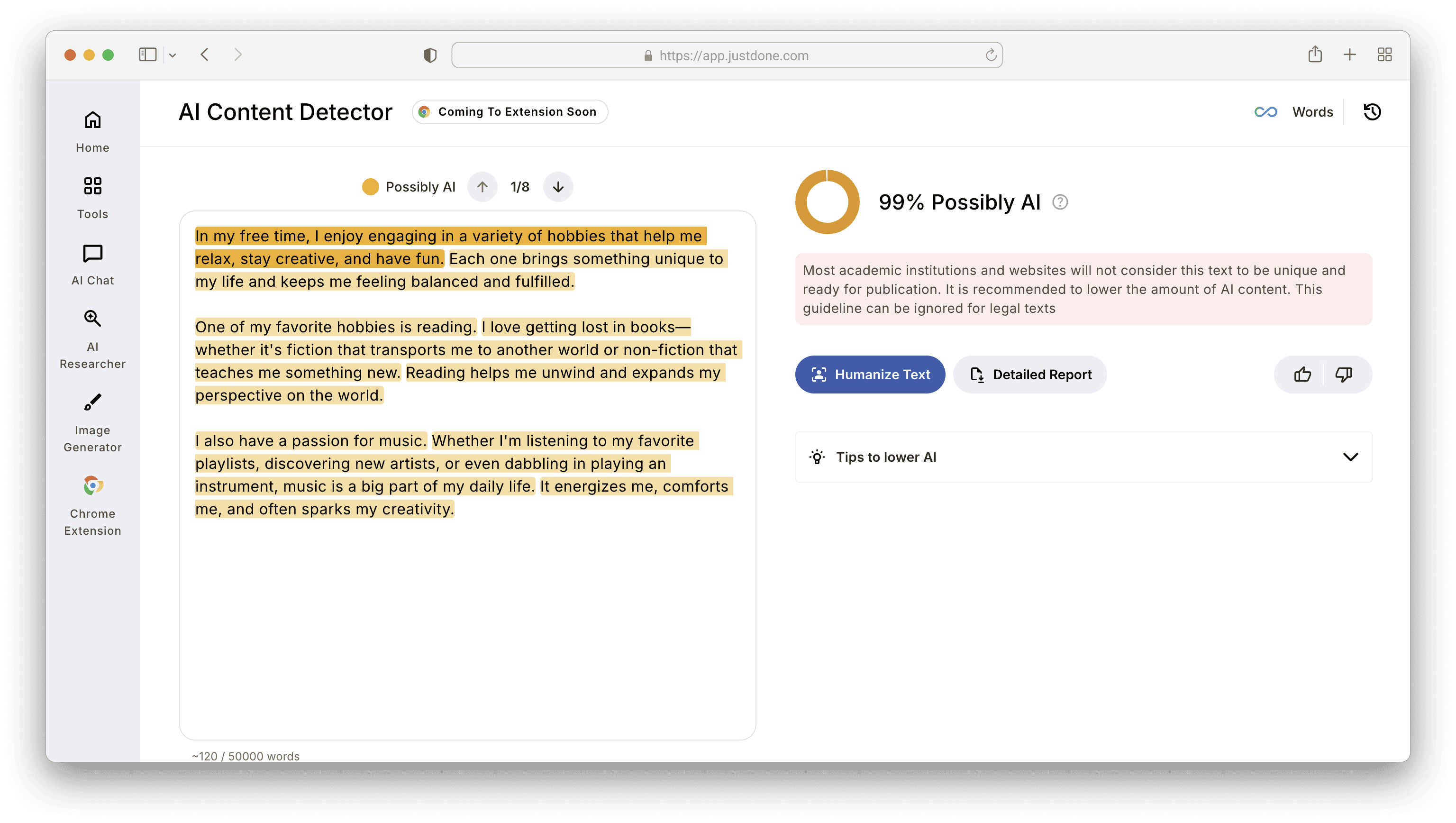

For instance, here is the result of AI detection of GPT-4 generation:

Critically, the dataset includes both raw AI output and edited AI drafts, reflecting how most people actually use AI writing tools.

Which Metrics Measure AI Detection Accuracy?

Understanding the metrics behind an AI detector helps you interpret its results correctly.

Confusion Matrix (The Foundation)

Every AI detection result falls into one of four categories:

| Outcome | Meaning |
| --- | --- |
| True Positive (TP) | AI text correctly flagged as AI |
| True Negative (TN) | Human text correctly cleared as human |
| False Positive (FP) | Human text incorrectly flagged as AI |
| False Negative (FN) | AI text incorrectly cleared as human |

False positives are the most damaging error type in academic and editorial contexts. They create unfounded accusations against human writers.
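The four outcomes can be tallied with a few lines of Python. This is a minimal sketch on made-up labels (1 = AI, 0 = human); `confusion_counts` is an illustrative helper, not part of any detector's API.

```python
from collections import Counter


def confusion_counts(labels, preds):
    """Count TP, TN, FP, FN for binary labels where 1 = AI and 0 = human."""
    names = {(1, 1): "TP", (0, 0): "TN", (0, 1): "FP", (1, 0): "FN"}
    counts = Counter(names[(y, p)] for y, p in zip(labels, preds))
    # Always report all four outcomes, even those that never occurred.
    return {k: counts.get(k, 0) for k in ("TP", "TN", "FP", "FN")}


# Hypothetical ground truth and detector verdicts for eight texts.
labels = [1, 1, 1, 0, 0, 0, 0, 1]
preds = [1, 1, 0, 0, 0, 1, 0, 1]

print(confusion_counts(labels, preds))
# {'TP': 3, 'TN': 3, 'FP': 1, 'FN': 1}
```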

Key Performance Metrics

Overall accuracy is the percentage of all texts correctly classified. It is useful as a headline figure but insufficient alone.

True Positive Rate (AI Detection Rate) is how reliably the detector catches AI content. Higher is better for screening purposes.

True Negative Rate (Human Text Accuracy) is how reliably the detector clears genuine human writing. It is critical for avoiding false accusations.

F-Beta Score combines precision and recall into one number, with beta setting the balance between them. AI detection systems typically weight this score toward precision (reducing false positives) rather than recall, which corresponds to a beta below 1.
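For readers who want to see how these metrics relate, here is a short sketch that derives them from confusion-matrix counts. The counts in the example are invented for illustration, and the default `beta=0.5` is an assumption chosen to show a precision-weighted score, not a value JustDone has published.

```python
def metrics(tp, tn, fp, fn, beta=0.5):
    """Compute headline metrics from confusion-matrix counts.

    A beta below 1 weights precision more heavily than recall;
    a beta above 1 does the opposite.
    """
    total = tp + tn + fp + fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0  # true positive rate
    tnr = tn / (tn + fp) if (tn + fp) else 0.0     # true negative rate
    b2 = beta ** 2
    denom = b2 * precision + recall
    f_beta = (1 + b2) * precision * recall / denom if denom else 0.0
    return {
        "accuracy": (tp + tn) / total,
        "tpr": recall,
        "tnr": tnr,
        "precision": precision,
        "f_beta": f_beta,
    }


# Hypothetical counts: 50 AI texts (40 caught), 50 human texts (45 cleared).
for name, value in metrics(tp=40, tn=45, fp=5, fn=10).items():
    print(f"{name}: {value:.3f}")
```

With these counts precision is higher than recall, so the precision-weighted score at beta 0.5 comes out higher than the recall-weighted score at beta 2.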

JustDone Performance on Academic and Technical Writing

Academic writing is one of the hardest domains for AI detectors. Scientific prose uses structured sentences, disciplined repetition, and formal phrasing — patterns that many detectors incorrectly flag as AI-generated.

JustDone was benchmarked across three specialist datasets:

| Dataset | Accuracy |
| --- | --- |
| ArXiv scientific papers | 100% |
| News articles | 100% |
| PubMed technical content | 83.3% |

These results are particularly relevant for:

- Academic integrity officers reviewing student submissions

- Research editors verifying technical manuscripts

- Publishers auditing contributed content at scale

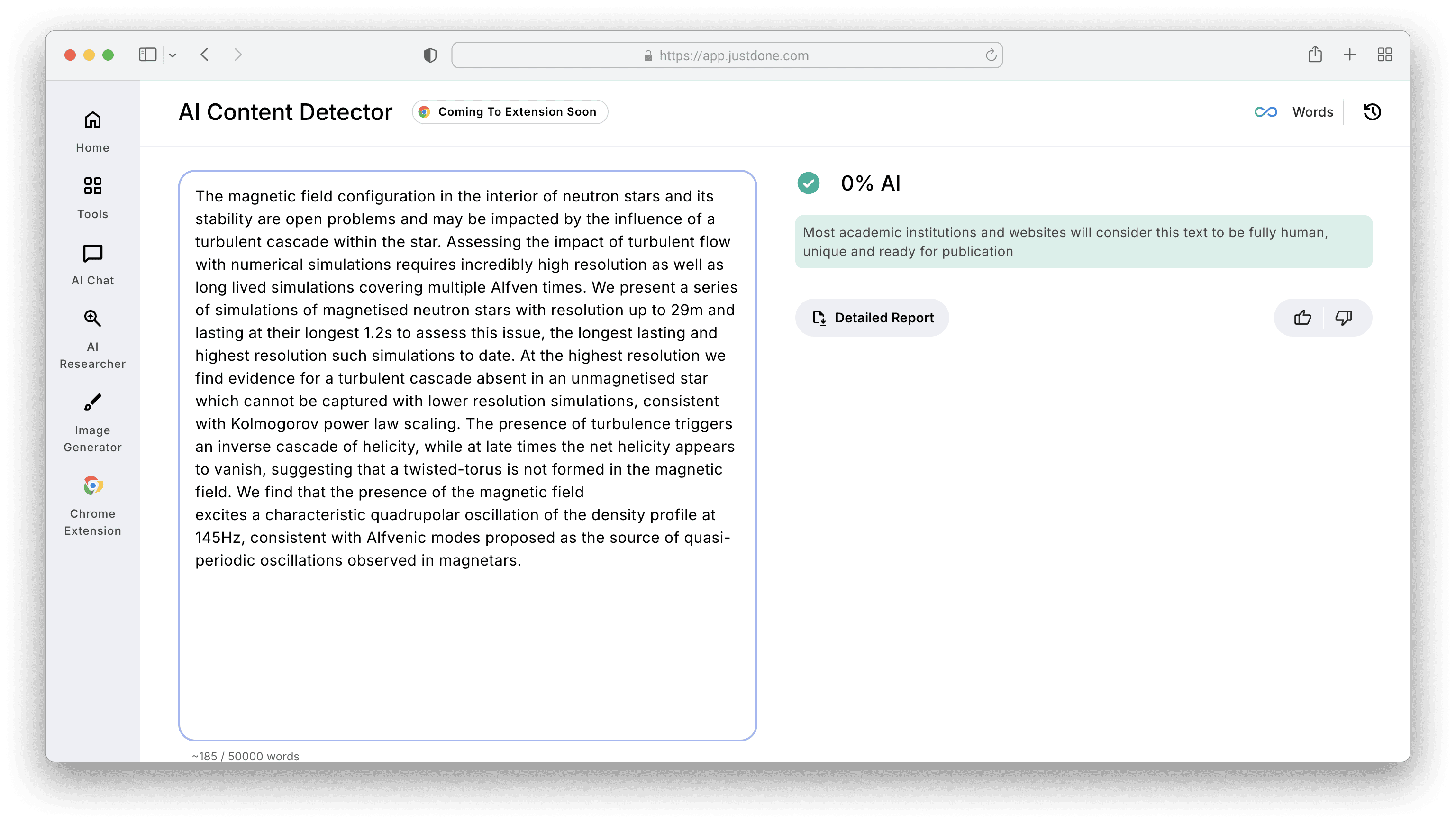

The output of JustDone AI detection for ArXiv text is here:

The 100% accuracy on ArXiv papers is especially notable, as this is the category where competing tools most commonly produce false positives.

Detecting Hybrid AI-Human Text: The Hardest Problem in AI Detection

Pure AI-generated text is increasingly rare. Most AI-assisted writing involves a human who edits, restructures, or rewrites AI-generated drafts.

This hybrid content is the most difficult category for any AI detector because it contains mixed signals: some passages statistically resemble AI, others resemble human writing.

JustDone's hybrid text results:

- Correctly identifies 60% of hybrid texts as partially AI-generated

- Achieves a 22.5% partial identification rate in benchmark testing

Few AI detection tools report hybrid performance at all. Reporting this metric gives a more realistic picture of what AI detection can and cannot do.

JustDone vs GPTZero vs Copyleaks: Full Comparison

| Detector | AI Detection Rate | Error Rate | Hybrid Detection | Best Use Case |
| --- | --- | --- | --- | --- |
| JustDone | 80% | 10.3% | 60% | Balanced accuracy, low false positives |
| GPTZero | 70% | 20.5% | Limited data | General use, academic |
| Copyleaks | High (unspecified) | 23.7% | Limited data | Plagiarism + AI combo checking |

Key takeaway: GPTZero and Copyleaks both prioritize aggressive AI detection, which inflates their error rates. JustDone's architecture is tuned for balanced classification — catching more AI text while generating fewer false accusations against human writers.

For contexts where a false positive carries real consequences (academic penalties, editorial rejection, employment decisions), a lower error rate is the metric that matters most.

How to Interpret AI Detector Results Correctly

AI detection tools output probability scores, not definitive verdicts. Even the most accurate detector will be wrong in some cases.

Best practices for responsible interpretation:

- Treat results as a starting point, not a conclusion

- Review the flagged text directly — look for structural or stylistic inconsistencies

- Check revision history where available (Google Docs, Word, CMS logs)

- Cross-reference with a second detector for high-stakes decisions

- Consider domain context — highly structured writing (legal, technical, scientific) naturally resembles AI output

No AI detector should be the sole basis for an accusation or a rejection decision.
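The practices above could be encoded as a simple triage step. The sketch below is only one possible shape for such a rule: the function name `triage`, the thresholds `flag_at` and `clear_at`, and the outcome labels are all assumptions made for illustration, and the scores are taken to be AI-probability values between 0.0 and 1.0 from two separate detectors. The only figure drawn from this guide is the 350-character minimum.

```python
MIN_CHARS = 350  # minimum length this guide cites for reliable results


def triage(text, score_a, score_b, flag_at=0.8, clear_at=0.2):
    """Suggest a next step from two detectors' AI-probability scores.

    The thresholds are illustrative assumptions, not published values.
    The function never returns a verdict, only a suggested next step.
    """
    if len(text) < MIN_CHARS:
        return "too short to assess"
    if score_a >= flag_at and score_b >= flag_at:
        return "manual review recommended"
    if score_a <= clear_at and score_b <= clear_at:
        return "no action"
    return "inconclusive"


sample = "x" * 400  # placeholder text long enough to assess
print(triage(sample, 0.90, 0.85))  # manual review recommended
print(triage(sample, 0.90, 0.10))  # inconclusive
```

Even the strongest outcome here only recommends a manual review, which keeps a human decision between the score and any consequence.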

FAQ

How accurate is the JustDone AI Detector?

JustDone achieves 80% accuracy on pure AI-generated text with a 10.3% total error rate — lower than GPTZero (20.5%) and Copyleaks (23.7%).

What is a false positive in AI detection?

A false positive occurs when a detector incorrectly classifies human-written text as AI-generated. This is the most harmful type of error in academic and editorial settings.

Can JustDone detect ChatGPT and Claude outputs?

Yes. JustDone is trained on outputs from GPT-4, Claude, Gemini, and other major LLMs.

What is the minimum text length for accurate AI detection?

JustDone requires a minimum of 350 characters for reliable results. Shorter texts lack sufficient linguistic signals for confident classification.

Does JustDone detect AI-edited human text?

JustDone correctly identifies 60% of hybrid AI-human texts — one of the few detectors to benchmark and report this metric.

How does JustDone compare to GPTZero?

JustDone outperforms GPTZero on both detection rate (80% vs 70%) and error rate (10.3% vs 20.5%) in comparative testing.

Conclusion: Is JustDone the Most Accurate AI Detector?

Based on independently structured testing, JustDone delivers a stronger accuracy-to-error-rate balance than the other tools tested (GPTZero and Copyleaks) across academic, journalistic, and technical writing domains.

The combination of a 10.3% error rate, 80% AI detection accuracy, and 100% accuracy on ArXiv and news datasets makes it a strong choice for contexts where false positives carry real-world consequences.

AI detection is a probabilistic tool, not a certainty engine. But when the stakes are high, the error rate of the detector you choose matters, and JustDone currently holds the benchmark advantage among publicly tested tools.