Key Takeaways:

- ChatGPT generates responses probabilistically, so the same prompt can produce different outputs every time.

- Memory, custom instructions, conversation history, and model version all influence what each user sees.

- JustDone's AI detection, humanization, and plagiarism-checking tools help make AI-generated content more consistent.

Does ChatGPT give different answers to the same question? Yes, it does, almost every time and for every user. Millions of people type nearly identical prompts into ChatGPT daily and walk away with responses that differ in structure, phrasing, examples, and sometimes substance.

This happens because ChatGPT generates each response probabilistically, token by token, rather than retrieving a stored answer from a database. Temperature settings, memory, custom instructions, and model version all contribute to the variation.

This variation affects how much you can rely on a single output, how detectable AI-generated content is, and what you can do to get more consistent results.

This article explains why ChatGPT generates different responses for different users, what factors drive the variation, whether you can get the same answer twice, and how to reduce inconsistency when consistency actually matters.

The Short Answer

- No. ChatGPT does not give the same answers to everyone.

- Two people submitting the exact same prompt at the same moment can receive responses that differ in structure, phrasing, length, examples used, and sometimes even factual content. This is true even when everything else appears identical.

- The reasons come down to four factors: the probabilistic architecture that generates each response token by token, temperature settings that control how random the output is, personal context like memory and custom instructions, and the model version each user has access to.

Why Does ChatGPT Give Different Answers to the Same Question?

To understand why responses vary, you need to understand what ChatGPT is actually doing when it generates a reply. It is not looking up a stored answer in a database. It is constructing a response from scratch, one piece at a time.

Next-Token Prediction and Probability

ChatGPT is a large language model that works by predicting the next token (roughly a word or part of a word), given everything that came before it. At each step, the model doesn't pick a single definitive answer. It calculates a probability distribution across thousands of possible next tokens and samples from that distribution.

As OpenAI explained in its 2025 documentation, the model is trained to predict the next word based on the words that came before it.

Think of it like a weighted dice roll. The most likely word has the highest probability, but words further down the list still have a chance of being chosen. The model repeats this process once per token, which adds up to hundreds or thousands of rolls per response, so small variations accumulate into meaningfully different outputs.

This is why ChatGPT generates different answers to the same question even when asked back to back in the same session. The randomness is baked into how the model generates text.
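To make the weighted dice roll concrete, here is a minimal Python sketch. The tokens and probabilities are invented for illustration and do not come from a real model, and `sample_next_token` and `sample_many` are hypothetical helper names.

```python
import random

# Toy next-token distribution for a prompt like "The sky is".
# These tokens and probabilities are made up for illustration.
NEXT_TOKEN_PROBS = {"blue": 0.70, "clear": 0.15, "gray": 0.10, "falling": 0.05}


def sample_next_token(probs, rng):
    """Pick one token at random, weighted by its probability."""
    tokens = list(probs)
    weights = list(probs.values())
    return rng.choices(tokens, weights=weights, k=1)[0]


def sample_many(probs, n, seed):
    """Draw n tokens with a seeded generator so a run can be repeated."""
    rng = random.Random(seed)
    return [sample_next_token(probs, rng) for _ in range(n)]


# Different seeds stand in for different users or different attempts.
print(sample_many(NEXT_TOKEN_PROBS, 10, seed=1))
print(sample_many(NEXT_TOKEN_PROBS, 10, seed=2))
```

The seed is only there so the sketch can be rerun. ChatGPT does not give you a seed to pin down, which is part of why two attempts rarely match.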

Temperature and Sampling Settings

Temperature is the setting that controls how much randomness goes into each response. It is similar to a dial. Turn it down, and ChatGPT sticks to the most likely, predictable word choices. Turn it up, and it reaches for less obvious options, producing more varied and creative output.

The scale runs from 0 to 2 on most models.

Low temperature (0 to 0.3) works best for precise tasks like data extraction or grammar correction.

Mid-range (around 0.5) suits most writing tasks. Higher settings (0.7 to 1) produce more original responses but also increase the chance of errors or off-topic content.
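The dial can be sketched with the standard temperature-scaled softmax: divide each token's raw score by the temperature, then convert the scores to probabilities. The scores below are hypothetical, and this is a simplified picture rather than OpenAI's actual implementation.

```python
import math

# Hypothetical raw scores (logits) for four candidate tokens.
LOGITS = {"blue": 4.0, "clear": 2.5, "gray": 2.0, "falling": 1.0}


def softmax_with_temperature(logits, temperature):
    """Convert raw scores to probabilities after dividing by the temperature."""
    if temperature <= 0:
        # Real systems usually treat 0 as "always pick the top token".
        raise ValueError("temperature must be positive in this sketch")
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


for t in (0.2, 0.5, 1.0, 2.0):
    probs = softmax_with_temperature(LOGITS, t)
    print(t, {tok: round(p, 3) for tok, p in probs.items()})
```

At low temperature nearly all of the probability lands on the top token. At high temperature the distribution flattens, so unlikely tokens get picked more often.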

Not every model supports temperature, and this matters.

If you use ChatGPT through the website or app, you cannot adjust temperature yourself. OpenAI sets it internally for each model. You get whatever they've configured.

If you use the API, it depends on which model you're calling:

- GPT-4o and earlier models let developers set temperature directly. This gives more control over how consistent or creative the output is.

- GPT-5 does not support temperature at all. Passing the parameter returns an error. GPT-5 is a reasoning model that works differently under the hood, and temperature simply isn't part of how it operates. OpenAI's other reasoning models, o1 and o3, work the same way.
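If you call the API from code, one way to handle this split is to attach temperature only for models that accept it. The sketch below is a hypothetical helper, and the set of models that reject temperature is taken from the list above rather than from an official source.

```python
# Models that, according to the list above, reject the temperature parameter.
# This set is an assumption for illustration, not an official list.
NO_TEMPERATURE_MODELS = {"gpt-5", "o1", "o3"}


def build_request(model, prompt, temperature=None):
    """Return keyword arguments for a chat-style API call (hypothetical helper)."""
    request = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    # Attach temperature only when it was requested and the model accepts it.
    if temperature is not None and model not in NO_TEMPERATURE_MODELS:
        request["temperature"] = temperature
    return request


print(build_request("gpt-4o", "Summarize this paragraph.", temperature=0.2))
print(build_request("gpt-5", "Summarize this paragraph.", temperature=0.2))
```

With the OpenAI Python SDK, a dictionary like this would typically be passed as `client.chat.completions.create(**request)`. Check the current API reference before relying on the model list.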

One thing that surprises most people: setting the temperature to 0 does not guarantee identical responses every time. Even at 0, small variations appear. This happens because of how GPU math works, how requests get batched on OpenAI's servers, and how some models route requests internally. One researcher ran the same prompt 1,000 times at temperature 0 and got 80 distinct responses. They matched for the first 102 tokens, then started to differ. So temperature explains part of why ChatGPT varies, but most users never touch it. And for GPT-5 users, it is not a factor at all.
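The GPU math point is easier to see with a tiny example. Floating-point addition gives slightly different results depending on the order in which numbers are added, and when two tokens are nearly tied, that tiny difference can flip which one wins. The tokens and scores below are invented, and real inference involves far larger sums.

```python
# Floating-point addition is not associative: grouping changes the result.
left_to_right = (0.1 + 0.2) + 0.3
right_to_left = 0.1 + (0.2 + 0.3)
print(left_to_right == right_to_left)  # False


def greedy_pick(scores):
    """Temperature-0 style decoding: always take the highest-scoring token.

    On an exact tie, max() keeps the token listed first.
    """
    return max(scores, key=scores.get)


# Two hypothetical tokens whose scores are mathematically tied at 0.6.
# Only the order of addition differs between the two runs.
run_a = {"but": 0.6, "however": left_to_right}
run_b = {"but": 0.6, "however": right_to_left}
print(greedy_pick(run_a), greedy_pick(run_b))
```

Once a single token differs, everything after it is generated from a different context, which is how responses can match for a while and then drift apart.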

Does ChatGPT Generate the Same Responses for Different Users?

Beyond the randomness built into the model itself, several factors create differences between what different users receive.

Memory and Custom Instructions

ChatGPT's Memory feature allows the model to retain information about you across conversations. If you've told ChatGPT that you work in healthcare, prefer concise answers, or are writing for a non-technical audience, those preferences get applied to future responses — even when you start a fresh chat.

Custom Instructions work similarly. OpenAI confirms that custom instructions are considered every time the model responds, meaning two users with different instructions will receive different outputs even from identical prompts.

You can set persistent preferences that shape every conversation: a default writing tone, your role, your goals, topics to avoid. Two users sending the same prompt will get different responses if one has Custom Instructions enabled and the other doesn't.

A prompt like "write me a short explanation of machine learning" will produce a very different result for a user whose Custom Instructions say "I'm a data scientist who prefers technical depth" versus one that says "I teach middle school and need simple, jargon-free language."
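A rough way to picture this: the model never sees your prompt alone. The sketch below assumes custom instructions are prepended as a system-style message, which is a common pattern in chat APIs. How ChatGPT does this internally is not public, so treat the layout and the `build_messages` helper as hypothetical.

```python
def build_messages(prompt, custom_instructions=None):
    """Assemble the message list sent to the model (hypothetical layout)."""
    messages = []
    if custom_instructions:
        # Assumption: instructions travel as a system-style message.
        messages.append({"role": "system", "content": custom_instructions})
    messages.append({"role": "user", "content": prompt})
    return messages


PROMPT = "Write me a short explanation of machine learning"

scientist = build_messages(PROMPT, "I'm a data scientist who prefers technical depth.")
teacher = build_messages(PROMPT, "I teach middle school and need simple, jargon-free language.")
plain = build_messages(PROMPT)

# The typed prompt is identical, but the full input to the model is not.
print(scientist[-1] == teacher[-1])
print(scientist == teacher)
```

Because the full input differs, the probability distribution over next tokens differs too, before any randomness is applied.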

Conversation Context

Within a single conversation, every message you've sent shapes what comes next. ChatGPT reads the entire conversation history as context, including the questions you've asked, the answers you've given feedback on, and the style of your writing. The model adapts its style, depth, and framing to match the conversation that's already happened.

This means the same question asked at message three of a conversation will get a different answer than the same question asked at message twenty, even within the same chat window.

Model Version

Not everyone is talking to the same model. Free-tier users typically have access to lighter or older model versions, while Plus and Pro subscribers get access to GPT-4o, GPT-5, and other more capable models. Different model architectures, trained on different data at different times, produce systematically different kinds of responses.

If you need more controlled, predictable outputs, especially for business writing or professional content, use JustDone's AI Chat. It is built to deliver structured, consistent results without the variability that comes from open-ended prompting.

Will ChatGPT Give the Same Answer Twice? Testing Reproducibility

The short answer: ChatGPT almost never gives the same answer twice, and you can't rely on it to.

If you open two separate browser windows, paste the exact same prompt into both, and hit enter simultaneously, you will get two different responses. The structure might be similar. The main points might overlap. But the wording, the examples, the order of arguments, and the phrasing of the conclusion will diverge.

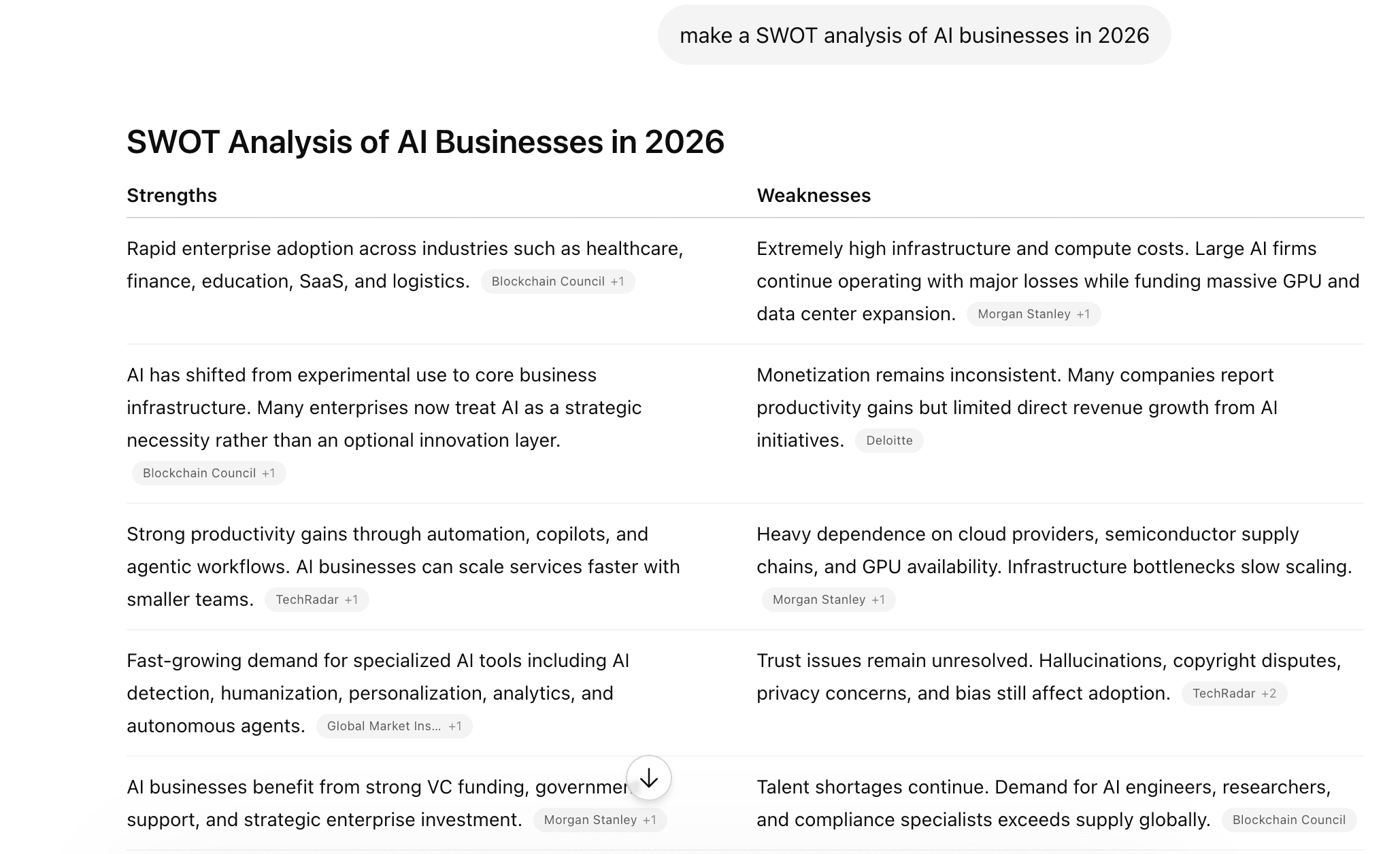

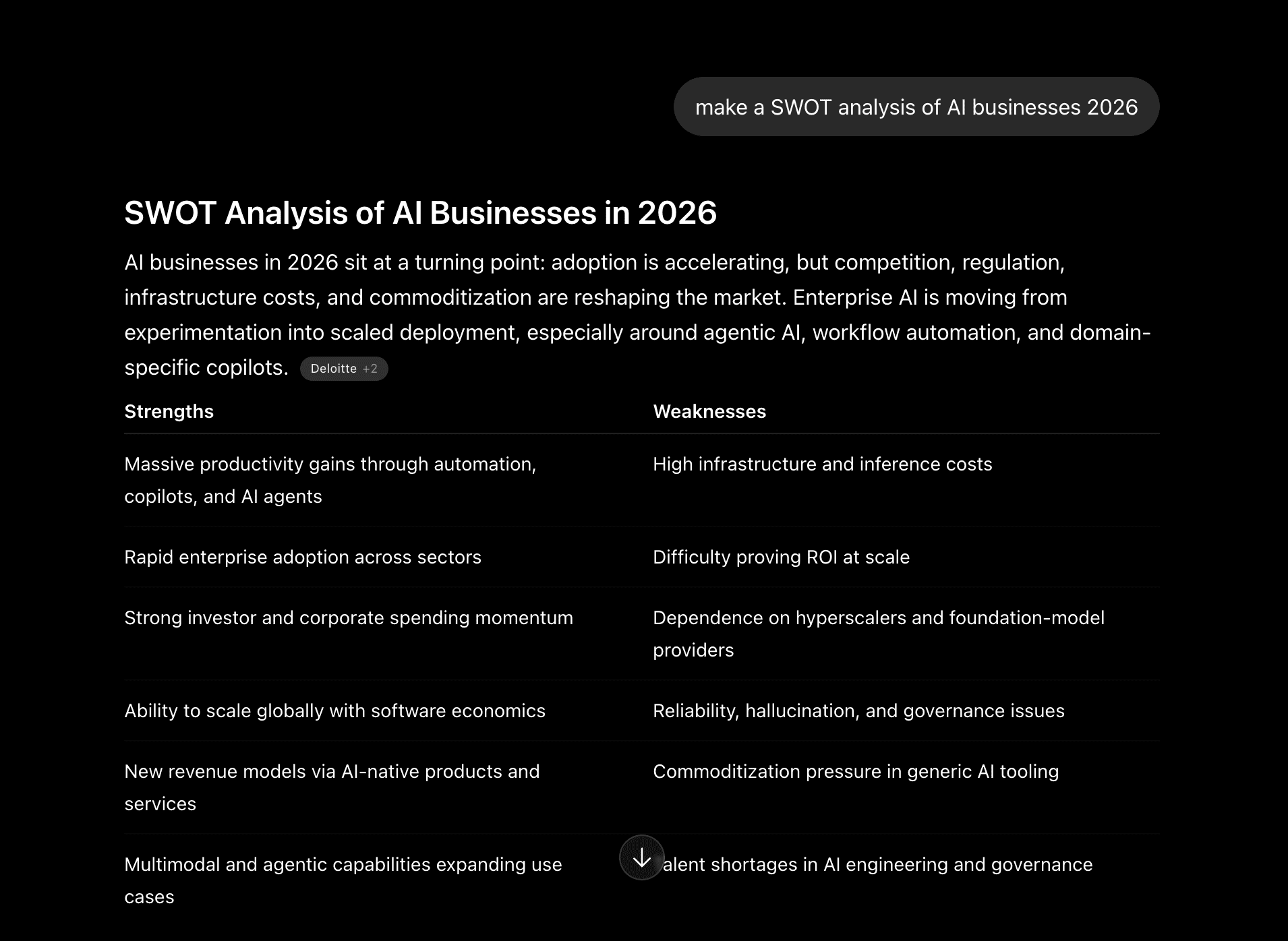

Let’s look at a real example. Here’s the response ChatGPT gave me from one account.

Now compare it to the response generated for the exact same prompt from another account:

The structure is broadly similar, but the second output opens with an introduction, while the first starts directly with the table. The content of the tables also differs.
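If you want a number rather than an eyeball comparison, you can score how much two responses overlap. This is a minimal sketch using Python's standard `difflib` module. The two sample responses are invented stand-ins, not the outputs from the screenshots, and `similarity` is a hypothetical helper name.

```python
from difflib import SequenceMatcher


def similarity(text_a, text_b):
    """Return a 0-1 similarity ratio between two texts, compared word by word."""
    return SequenceMatcher(None, text_a.split(), text_b.split()).ratio()


# Invented stand-ins for two responses to the same prompt.
response_1 = "Machine learning lets computers learn patterns from data."
response_2 = "Machine learning is a way for computers to find patterns in data."

print(round(similarity(response_1, response_2), 2))
```

A ratio of 1.0 means the texts are word-for-word identical. Word overlap is a crude measure: two answers can score low and still make the same point.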

This variability matters most in two contexts:

- Academic and professional writing. If you're using ChatGPT to help draft content you'll submit or publish, the inconsistency means you can't assume the version you got is the best or most accurate one. That is not because ChatGPT is unreliable, but because variation means quality is not guaranteed. Always run AI-generated content through an AI Detector before publishing, especially for academic or professional use.

- Collaborative work. If two people on a team both ask ChatGPT the same strategic question and act on the answers independently, they may be working from meaningfully different responses. The illusion of consistency can create real coordination problems.

How to Get More Consistent Answers From ChatGPT

Variation is a feature, not a flaw. But it's a feature that becomes a problem when you need reproducibility. Here's how to work with it.

Write Specific, Layered Prompts

Vague prompts produce variable outputs because the model has more degrees of freedom in how to interpret them. A prompt like "explain machine learning" could reasonably be answered in hundreds of different ways. A prompt like "explain machine learning in three paragraphs for a non-technical HR director making a hiring decision about AI roles" has much less room for interpretation.

The more constraints you add (length, audience, format, tone, specific points to cover), the more consistent your outputs will be.
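If you reuse the same kind of prompt often, a small template keeps the constraints from being forgotten. The helper below is a hypothetical sketch, and the field names are just one reasonable way to organize the constraints listed above.

```python
def build_prompt(task, audience=None, length=None, fmt=None, tone=None, must_cover=()):
    """Combine a task with explicit constraints into one prompt string."""
    parts = [task.strip().rstrip(".") + "."]
    if audience:
        parts.append(f"Audience: {audience}.")
    if length:
        parts.append(f"Length: {length}.")
    if fmt:
        parts.append(f"Format: {fmt}.")
    if tone:
        parts.append(f"Tone: {tone}.")
    if must_cover:
        parts.append("Cover these points: " + "; ".join(must_cover) + ".")
    return " ".join(parts)


print(build_prompt(
    "Explain machine learning",
    audience="a non-technical HR director making a hiring decision about AI roles",
    length="three paragraphs",
    tone="plain and practical",
))
```

The template does not remove randomness. It narrows the range of reasonable answers, which is what makes outputs feel more consistent.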

Provide Context Up Front

Start each conversation by giving ChatGPT the context it needs to calibrate its response. Who are you? What's the goal of this content? Who's the audience? What format do you need? This front-loading reduces variation because the model spends less time making assumptions and more time executing your specific request.

Use Custom Instructions Strategically

If you use ChatGPT regularly for a specific type of work, set Custom Instructions that lock in your preferences. Define your default audience, your preferred output length, your tone, and any constraints that apply to most of what you do. This narrows the solution space the model works within, producing more consistent results across sessions without requiring you to re-explain your context every time.

Verify and Humanize the Output

Regardless of how consistent your prompting is, AI-generated text requires a review pass.

- After generating content, polish it with JustDone's AI Humanizer to make it read naturally and reduce detection risk.

- Run final drafts through a Plagiarism Checker to ensure originality, especially when combining outputs from multiple prompts.

- For students and researchers, AI Homework Helper gives structured, more predictable academic support.

Common Myths About ChatGPT Answers

Here are the most popular myths about how ChatGPT generates its outputs:

1. ChatGPT gives the exact same essay to everyone

This is the assumption that gets students into trouble. Two people submitting the same essay prompt to ChatGPT will not get the same essay. The structure, examples, thesis framing, and concluding arguments will differ. However, the outputs will often share similar arguments, similar paragraph structures, and similar phrasings drawn from the same underlying training data.

2. If I'm polite, ChatGPT gives better answers

Partially true, but not for the reason most people think. Polite prompts tend to be more structured, because people who ask "Could you please write a summary of X that covers Y and Z, suitable for W?" provide more useful constraints than people who simply ask "summarize X." The structure does help. The politeness itself doesn't.

3. People can't tell if you use ChatGPT

This underestimates how far detection technology has come. AI-generated text carries statistical fingerprints — characteristic patterns in word choice, sentence length distribution, and structural consistency that are distinct from how humans write.
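To give a feel for what a statistical pattern means here, the sketch below measures one of the signals mentioned above: how much sentence length varies across a text. It is a toy illustration only. It is not how any real detector works, and a single number like this cannot tell you whether text was written by AI.

```python
import re
import statistics


def sentence_length_stats(text):
    """Return the sentence count plus the mean and spread of sentence lengths in words."""
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    lengths = [len(s.split()) for s in sentences]
    if not lengths:
        return {"sentences": 0, "mean": 0.0, "stdev": 0.0}
    return {
        "sentences": len(lengths),
        "mean": statistics.mean(lengths),
        "stdev": statistics.pstdev(lengths),  # low values mean very uniform sentences
    }


sample = "Short one. This sentence runs quite a bit longer than the first. Done."
print(sentence_length_stats(sample))
```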

JustDone's AI Detector identifies AI-generated text with high accuracy, picking up these patterns even after light editing. If you've used ChatGPT to write something you're presenting as your own, the assumption that it's undetectable is not a safe one. Reliable AI detection matters most when you work in an academic, professional, or publishing context.

Stop Wrestling With Inconsistent AI Output

The variability in ChatGPT's responses is a natural consequence of how probabilistic language models work, and it's often useful. But when you need consistent, reliable, and publication-ready content, working with raw ChatGPT output isn't the most efficient path.

JustDone is built for the full workflow: generating structured drafts, detecting AI patterns, humanizing outputs, and verifying originality. This is all possible without switching between tools. Whether you're a student finishing an assignment, a professional producing business content, or a creator publishing regularly, the combination of generation and verification in one place saves time and reduces risk.

Obviously, ChatGPT can’t give the same answers to everyone. The question is what you do with the output once you have it.